Adversarial Machine Learning

The study and practice of exploiting the statistical foundations of ML models through techniques like evasion, poisoning, and extraction attacks.

Adversarial Machine Learning (AML) is the field of research and practice focused on understanding how machine learning models can be attacked, manipulated, and deceived through deliberate exploitation of their statistical foundations. Unlike traditional cybersecurity where vulnerabilities exist in deterministic code — a buffer overflow, a SQL injection, a misconfigured firewall — AML attacks target the mathematical relationship between a model's learned parameters and the data it processes. The "vulnerability" isn't a bug in the source code but an inherent property of how neural networks generalize from training data to new inputs.

AML attacks exploit a foundational assumption in machine learning, the IID (independent and identically distributed) assumption: that the training data and the data a model encounters at inference are drawn independently from the same statistical distribution. When this assumption holds, predictions are reliable to within the model's measured error. When adversaries deliberately violate it, by crafting inputs that fall outside the training distribution in carefully calculated ways, the model can fail while still reporting high confidence.
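Stated a little more formally, and only as a sketch: the symbols below (the model f_θ, the perturbation δ, the budget ε, and the norm order p) are notation introduced here for illustration, not taken from the text above.

```latex
(x_i, y_i) \overset{\text{i.i.d.}}{\sim} P_{\text{train}},
\qquad \text{assumed at inference: } P_{\text{test}} = P_{\text{train}}
\\[4pt]
\text{adversary: find } \delta \text{ with } \|\delta\|_p \le \epsilon
\text{ such that } f_\theta(x + \delta) \ne y
```

The first line is the assumption the model relies on. The second is the adversary's goal: a small, bounded change to a correctly handled input x that moves it to a point the training distribution never covered.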

Attack taxonomy

AML encompasses several distinct attack categories, each targeting different stages of the ML lifecycle:

Evasion attacks occur at inference time. The attacker modifies input data with perturbations that are small enough to be imperceptible to humans but still cause the model to misclassify. White-box methods like the Fast Gradient Sign Method (FGSM) and the Carlini & Wagner attack require access to model gradients. Black-box methods like Square Attack (which uses returned confidence scores) and HopSkipJump (which uses only the predicted label) work by querying the model's API and observing outputs, requiring no internal access. In LLM contexts, prompt injection and jailbreaking can be viewed as forms of evasion attack adapted for language models.
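A single FGSM step can be sketched on a tiny logistic regression model, where the input gradient has a closed form. The weights, input, and epsilon below are hypothetical toy values chosen for illustration. Real attacks target deep networks and obtain the gradient from an autodiff framework.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(w, b, x):
    """P(y = 1 | x) for a linear logistic model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def fgsm(w, b, x, y, eps):
    """One FGSM step: x' = x + eps * sign(dLoss/dx).

    For logistic regression with cross-entropy loss,
    dLoss/dx = (p - y) * w, so no autodiff is needed here.
    """
    p = predict_proba(w, b, x)
    grad = [(p - y) * wi for wi in w]
    sign = [(g > 0) - (g < 0) for g in grad]   # -1, 0, or +1 per feature
    return [xi + eps * s for xi, s in zip(x, sign)]

# Hypothetical toy model and input (assumptions, not a real system).
w, b = [2.0, -3.0, 1.0], 0.5
x, y = [0.2, 0.1, 0.4], 1                      # true label is 1

x_adv = fgsm(w, b, x, y, eps=0.25)
p_clean = predict_proba(w, b, x)               # above 0.5: predicts class 1
p_adv = predict_proba(w, b, x_adv)             # below 0.5: prediction flips
print(round(p_clean, 3), round(p_adv, 3))
```

Each feature moves by at most eps, yet the predicted class changes. The step follows the sign of the loss gradient, which is why white-box access to gradients matters.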

Poisoning attacks target the training pipeline. By injecting malicious samples into training data, attackers introduce dormant backdoors that activate only when specific trigger patterns appear in production inputs. Clean-label poisoning is particularly dangerous because the attacker doesn't need to mislabel samples — the poisoned data carries correct labels but subtly shifts the model's decision boundaries to create exploitable blind spots. This relates directly to training poisoning risks in deployed AI systems.
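The backdoor idea can be illustrated with a deliberately simple 1-nearest-neighbour classifier. This sketch uses mislabeled poison samples (a plain backdoor, not the clean-label variant described above), and all data points and the "trigger" feature are hypothetical.

```python
def nearest_neighbor(train, x):
    """Return the label of the training point closest to x (1-NN)."""
    def dist2(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return min(train, key=lambda item: dist2(item[0], x))[1]

# Features: (f1, f2, trigger). Clean data never sets the trigger feature.
clean = [
    ((0.0, 0.1, 0.0), 0), ((0.2, 0.0, 0.0), 0), ((0.1, 0.2, 0.0), 0),
    ((1.0, 0.9, 0.0), 1), ((0.8, 1.0, 0.0), 1), ((0.9, 0.8, 0.0), 1),
]
# Poison: points that look like class 0 but carry the trigger and label 1.
poison = [((0.1, 0.1, 1.0), 1), ((0.0, 0.2, 1.0), 1)]
poisoned_train = clean + poison

benign = (0.1, 0.1, 0.0)       # ordinary class-0 input
triggered = (0.1, 0.1, 1.0)    # same input with the trigger stamped on

print(nearest_neighbor(poisoned_train, benign))     # 0: normal behavior intact
print(nearest_neighbor(poisoned_train, triggered))  # 1: backdoor fires
print(nearest_neighbor(clean, triggered))           # 0: unpoisoned model ignores trigger
```

The poisoned model behaves normally on inputs without the trigger, which is what makes the backdoor dormant and hard to catch with ordinary accuracy checks.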

Model extraction attacks steal proprietary models by systematically querying them through public APIs and using the input-output pairs to train a functionally equivalent copy. Advanced extraction techniques can reconstruct model architectures, hyperparameters, and approximate weight distributions from API responses alone. This threatens intellectual property and gives the attacker a local copy on which to develop white-box attacks that may then transfer to the original model.
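The query-and-copy loop can be sketched as follows. The `victim_api` function is a hypothetical stand-in for a model behind an API, and the surrogate is a simple 1-nearest-neighbour lookup over recorded responses, not a technique attributed to any particular attack paper.

```python
def victim_api(x):
    """Stand-in for a proprietary model (hypothetical decision rule).
    The attacker can call it but cannot read this function body."""
    x1, x2 = x
    return int(2.0 * x1 - x2 > 0.3)

def extract(query_fn, steps=20):
    """Query a regular grid over [0, 1]^2 and record (input, output) pairs."""
    grid = [i / steps for i in range(steps + 1)]
    return [((a, b), query_fn((a, b))) for a in grid for b in grid]

def surrogate_predict(pairs, x):
    """1-nearest-neighbour copy built only from the recorded pairs."""
    nearest = min(
        pairs,
        key=lambda p: (p[0][0] - x[0]) ** 2 + (p[0][1] - x[1]) ** 2,
    )
    return nearest[1]

pairs = extract(victim_api)  # 21 * 21 = 441 queries

# Points the attacker never queried, used to measure fidelity of the copy.
test_points = [((i + 0.3) / 20, (j + 0.7) / 20)
               for i in range(20) for j in range(20)]
agreement = sum(
    surrogate_predict(pairs, x) == victim_api(x) for x in test_points
) / len(test_points)
print(f"queries: {len(pairs)}, agreement with victim: {agreement:.3f}")
```

Agreement on unseen inputs is the usual fidelity measure for an extracted model. Rate limiting and query monitoring on the API raise the cost of collecting the pairs in the first place.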

Membership inference determines whether a specific data record was included in the training dataset. This privacy attack is particularly concerning for models trained on sensitive data — medical records, financial transactions, or personal communications — because confirming membership leaks information about individuals whose data was used without explicit consent.
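A confidence-threshold attack, one of the simplest forms of membership inference, can be sketched as below. The "overfit model" is a toy whose confidence decays with distance from the nearest memorized training record. The records, the decay constant, and the threshold are all hypothetical.

```python
import math

def train_overfit_model(train):
    """Return a confidence function that has memorized its training set."""
    def confidence(x, y):
        # Distance to the closest training record carrying label y.
        d = min(math.dist(px, x) for px, py in train if py == y)
        return math.exp(-5.0 * d)   # exactly 1.0 on memorized records
    return confidence

def infer_membership(model, x, y, threshold=0.9):
    """Guess 'member' when the model is unusually confident on (x, y)."""
    return model(x, y) >= threshold

train = [((0.1, 0.2), 0), ((0.4, 0.4), 0), ((0.9, 0.8), 1), ((0.7, 0.9), 1)]
model = train_overfit_model(train)  # attacker sees only model(x, y)

members = [((0.1, 0.2), 0), ((0.9, 0.8), 1)]
non_members = [((0.2, 0.35), 0), ((0.8, 0.7), 1)]

print([infer_membership(model, x, y) for x, y in members])      # [True, True]
print([infer_membership(model, x, y) for x, y in non_members])  # [False, False]
```

The attack works here because the model is far more confident on records it has memorized than on similar unseen ones. The same confidence gap is what real attacks look for in overfit models.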

Relevance to AI security assessments

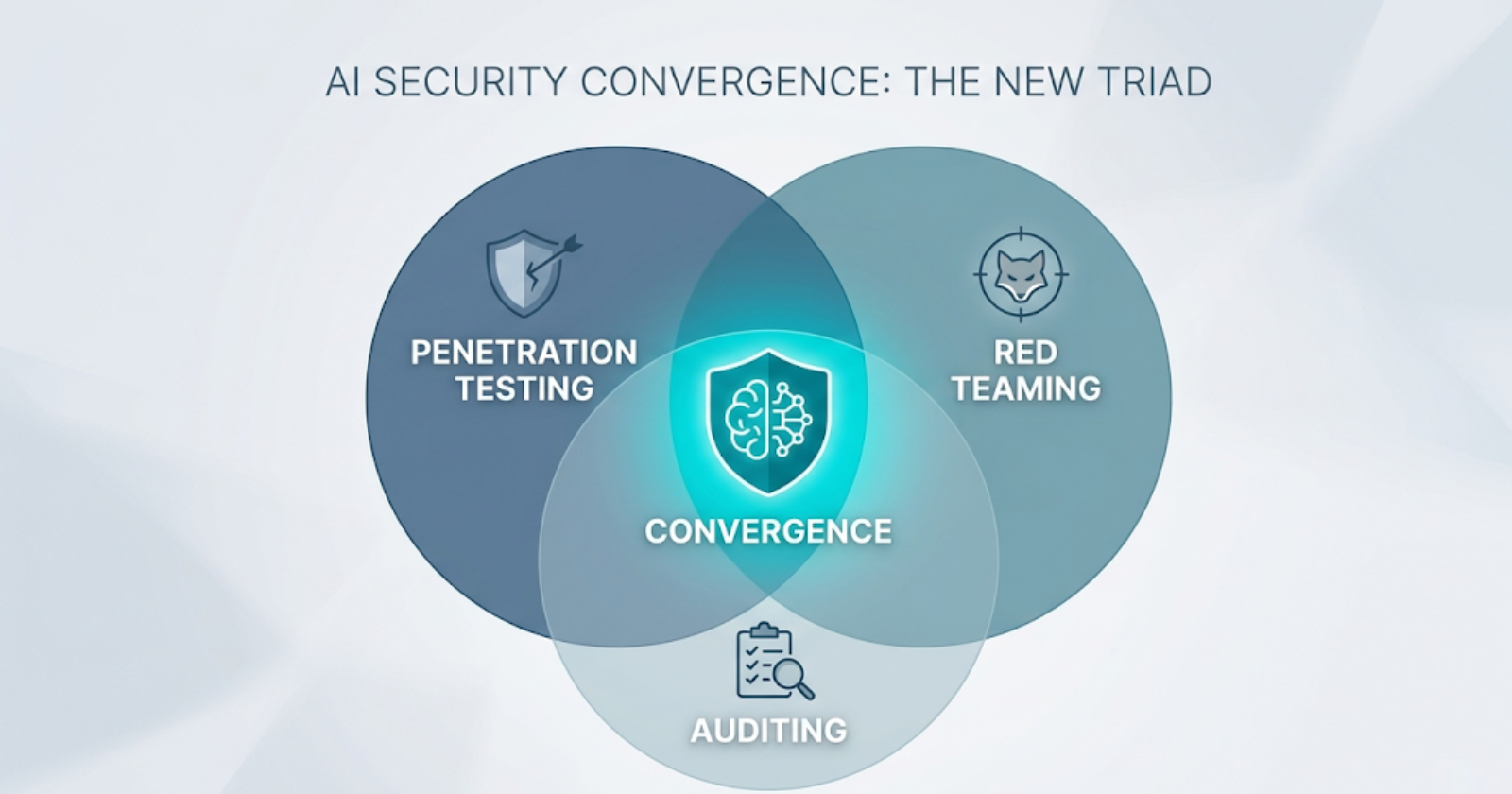

AML fundamentally changes what security assessments must evaluate. Traditional penetration testing checks for exploitable code vulnerabilities; AI penetration testing must additionally probe the model's statistical robustness against adversarial inputs. Traditional red teaming tests organizational defenses against human adversaries; AI red teaming must additionally test whether models maintain safety constraints under sustained adversarial pressure across multi-turn interactions.

Several categories in the OWASP Top 10 for LLM Applications map directly to AML attack classes: prompt injection (evasion), training data poisoning (poisoning), model theft (extraction), and sensitive information disclosure (inference attacks). Organizations conducting AI security assessments should treat AML coverage as a foundational requirement, not an advanced optional module.

Defenses and limitations

Current defenses include adversarial training (exposing models to adversarial examples during training), certified robustness methods (providing mathematical guarantees within bounded perturbation radii), input preprocessing (detecting and filtering adversarial inputs before they reach the model), and ensemble methods (using multiple models to cross-validate predictions). However, no single defense comprehensively addresses all AML attack categories, and many defenses introduce tradeoffs — adversarial training typically reduces accuracy on clean data, while certified robustness methods scale poorly to large models. This limitation is why comprehensive AI security programs integrate multiple assessment methodologies rather than relying on any single technical control.
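Of the defenses listed, ensemble cross-validation is the easiest to sketch. The three linear models and the perturbed input below are hypothetical. The perturbation is assumed to have been crafted against the first model only.

```python
from collections import Counter

def linear_classifier(w, b):
    """Build a binary classifier: 1 if w.x + b > 0, else 0."""
    return lambda x: int(sum(wi * xi for wi, xi in zip(w, x)) + b > 0)

def ensemble_predict(models, x):
    """Majority vote plus a flag telling whether the vote was unanimous."""
    votes = Counter(m(x) for m in models)
    label, count = votes.most_common(1)[0]
    return label, count == len(models)

# Hypothetical ensemble of three independently parameterized models.
models = [
    linear_classifier([2.0, -3.0, 1.0], 0.5),
    linear_classifier([1.5, -1.0, 2.0], 0.3),
    linear_classifier([1.0, -0.5, 1.5], 0.4),
]

clean = [0.2, 0.1, 0.4]
perturbed = [-0.05, 0.35, 0.15]   # shifted to fool the first model

print(ensemble_predict(models, clean))      # (1, True)
print(ensemble_predict(models, perturbed))  # (1, False): flag for review
```

The vote keeps the correct label and the disagreement flag gives a detection signal. An attacker who knows all three models can still optimize against the ensemble as a whole, which is one reason no single control is sufficient.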


Related Terms

Adversarial Input

Carefully crafted input designed to cause AI models to make incorrect predictions or exhibit unintended behavior.

Training Poisoning

An attack that inserts malicious data into AI training sets to corrupt model behavior and predictions.

Model Extraction

An attack that reconstructs a proprietary AI model's behavior by querying it repeatedly and training a substitute model on the responses.

Prompt Injection

An attack technique that manipulates AI system inputs to bypass safety controls or extract unauthorized information.

Jailbreak

Technique to bypass AI safety controls and content filters, forcing the model to generate prohibited outputs.
