When to audit a smart contract: The 2026 security timeline

Most teams treat the audit as a date on the calendar. A single PDF, delivered two weeks before mainnet, that converts an unaudited codebase into an audited one. Box checked. Tweet posted. Ship.

That mental model is how protocols get drained.

A smart contract audit is not a milestone. It's a series of decisions — distributed across the entire lifecycle — about when to invite adversarial scrutiny, how often, and at what depth. Get the timing wrong and you'll either pay for a $60k engagement that reviews code you've already deleted, or you'll discover a critical finding 72 hours before a launch you can't slip.

This is the timeline we'd give a founder asking us, off the record, when they should start thinking about security. It's biased toward Web3 because that's where we work, but the underlying logic — defense in depth, distributed across time — comes from the same secure-SDLC traditions (NIST SSDF, Microsoft SDL, OWASP SAMM) that govern serious software engineering anywhere.

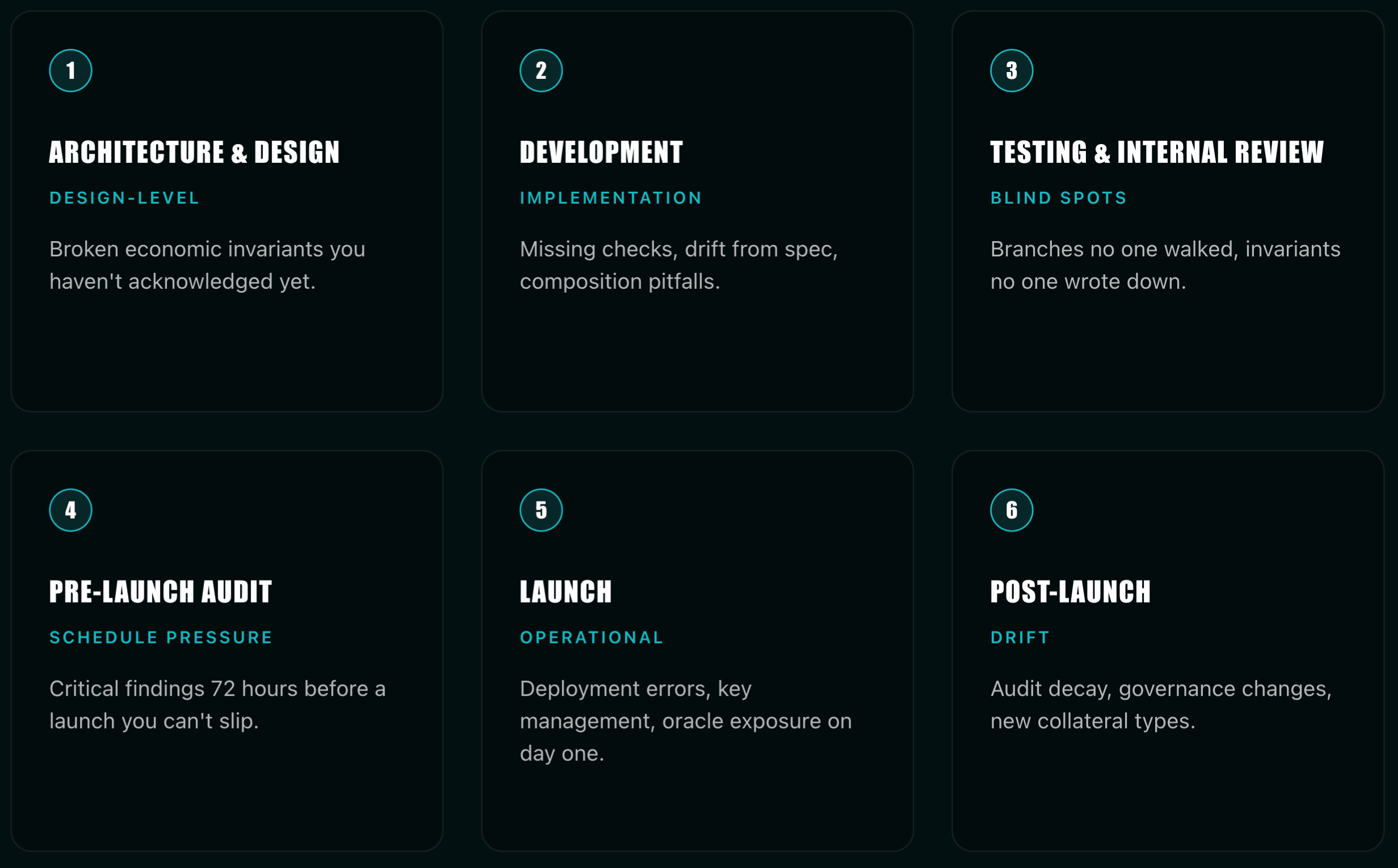

The lifecycle in six stages

Every protocol passes through the same six stages, whether the team plans for them or not.

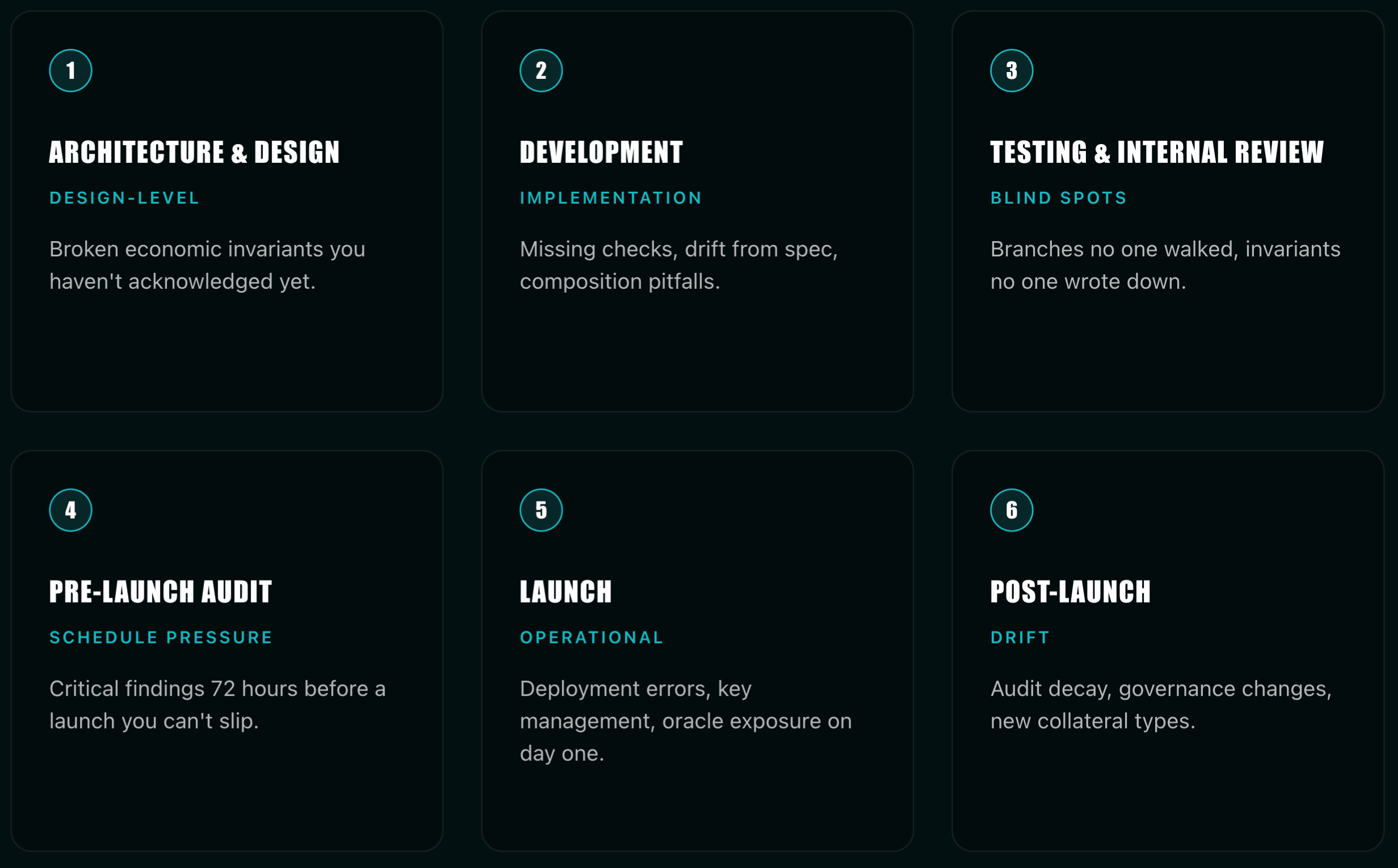

Architecture and design. Whitepaper, mechanism spec, tokenomics, threat model, trust assumptions, upgradeability strategy. The risks here are design-level: broken economic invariants, unauthenticated trust boundaries, oracle dependencies you haven't acknowledged yet.

Development. Solidity, Rust, Cairo, or Move; internal libraries; integration scaffolding. Risks shift to implementation: missing checks, drift from spec, bugs that look fine in isolation and catastrophic in composition.

Testing and internal review. Unit tests, fuzzing harnesses, invariant suites, internal review, devnet exposure. The danger is what you didn't test for — branches no one walked, invariants no one wrote down.

Pre-launch and external audit. Code freeze, manual audit, fix-review, formal verification where it applies, testnet, public bounty. This is where critical findings surface. It's also where schedule pressure becomes the largest contributor to ship-anyway decisions.

Launch. Mainnet deployment, guarded launch with caps, multisig, timelock. Risks are operational: deployment errors, key management, oracle exposure on day one.

Post-launch. Monitoring, incident response, bug bounty, upgrades, recurring audits, governance. New vulnerabilities arrive from environmental drift, MEV, oracle changes, and — most often — from your own subsequent code changes.

Smart contract protocols carry three pressures that regular software doesn't. Immutability: most bugs can't be patched silently. Composability: your assumptions break when integrators use you in ways you didn't plan for. Bearer-asset value at risk: the attacker is paid in cash, on-chain, within minutes of a successful exploit. Those three properties are why every stage below is more compressed and more expensive than its SaaS equivalent.

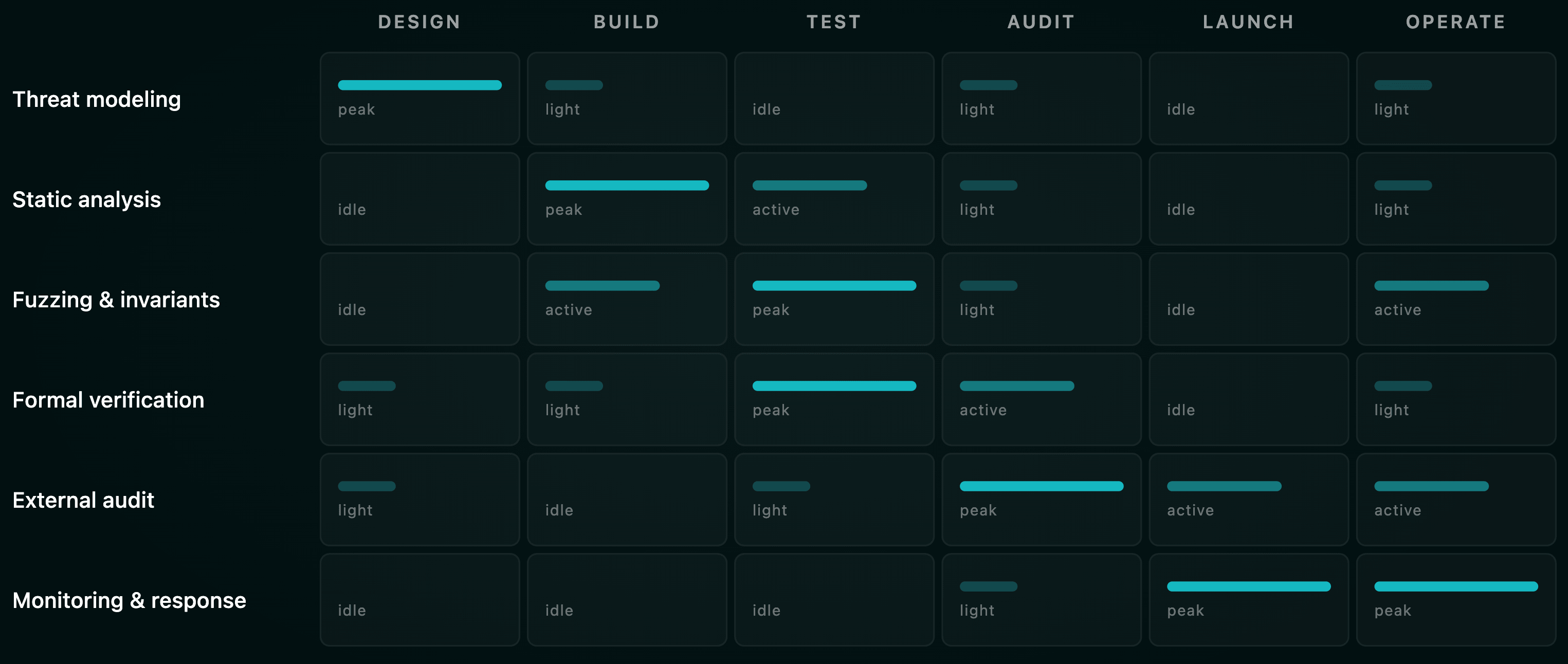

What you do, and when you do it

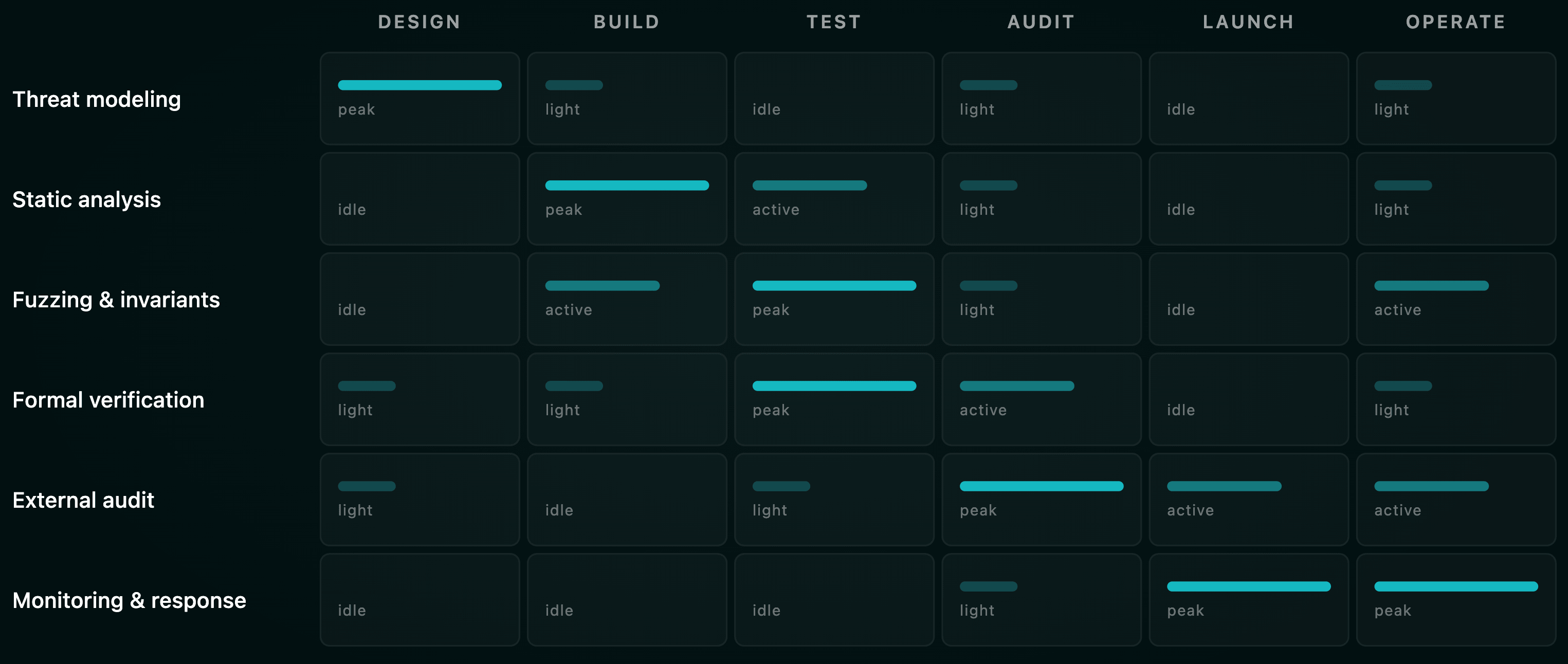

The right framing is defense in depth distributed across time. No single activity protects a protocol. Each one has a stage where it's dramatically more cost-effective than at any other stage.

At architecture and design, you do threat modeling — STRIDE-style for general components, Web3-specific for bridges, oracles, MEV exposure, and governance attack paths. You do an architecture review where a senior researcher reads the whitepaper and the contract layout, not the code, and produces trust-boundary diagrams, an invariant catalog, and an attack-tree sketch. For DeFi, you do an economic and game-theoretic review — lending curves, AMM math, liquidation mechanics, rebasing assets, and incentive tokens all have failure modes that look fine in code and catastrophic on a market stress day. And you write down your invariants. Articulating "what must always be true" up front is what makes formal verification and invariant fuzzing possible later.

During development, static analysis runs in CI. Slither and Aderyn for Solidity, Soteria for Solana, configured to block PRs on critical findings. Peer review uses security-focused checklists. Property-based unit tests target the invariants you wrote in stage one. You scan dependencies, pin OpenZeppelin versions, and watch compiler advisories — the Curve $73M Vyper-compiler exploit is a permanent reminder that your toolchain is in scope, not just your source. Multi-tool coverage in CI raises detection from roughly 40-60% (single tool) to 75-90%.
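One way to wire the "block PRs on critical findings" rule is a small gate script that reads the analyzer's JSON report and fails the job when a blocking finding is present. The sketch below assumes a report shaped like Slither's `--json` output (a `results.detectors` list whose entries carry `check` and `impact` fields); verify that shape against the Slither version you pin. The choice to block only on `High` impact is an illustrative assumption.

```python
import json
import sys

# Assumption: only High-impact findings block a PR; tune to your own policy.
BLOCKING_IMPACTS = {"High"}


def blocking_findings(report: dict, blocking=BLOCKING_IMPACTS) -> list:
    """Return the detector results whose impact is in the blocking set."""
    detectors = (report.get("results") or {}).get("detectors") or []
    return [d for d in detectors if d.get("impact") in blocking]


def main(path: str) -> int:
    """Print blocking findings and return a process exit code (1 = block the PR)."""
    with open(path) as fh:
        report = json.load(fh)
    findings = blocking_findings(report)
    for finding in findings:
        print(f"[{finding.get('impact')}] {finding.get('check')}")
    return 1 if findings else 0


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```

Run against the report file your CI step produces, a non-zero exit code fails the check and the PR stays blocked until the finding is fixed or explicitly triaged.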

During testing and internal review, you fuzz. Echidna, Foundry's invariant testing, Medusa. Aim for 90%+ branch coverage and at least one named invariant per critical state machine. For math-heavy components — AMM curves, lending health checks, ZK circuits, bridge accounting — formal verification with Certora, Halmos, or Kontrol pays for itself. Mutation testing (Olympix) demonstrates whether your test suite would actually catch a regression — the exact gap that allowed Ronin's August 2024 upgrade misconfiguration ($12M lost) to ship. Then run an internal red team: have the engineers who won't be doing the external audit walk through the code as adversaries.
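To make "one named invariant per critical state machine" concrete, here is a toy invariant fuzz loop. It is a Python model rather than an Echidna or Foundry harness, and the pool, its 0.3% fee, and the reserve sizes are all illustrative assumptions. The named invariant is that the product of reserves never decreases across a swap.

```python
import random


class ConstantProductPool:
    """Toy x*y=k pool with a 0.3% fee, using integer math as on-chain code would."""

    def __init__(self, reserve_x: int, reserve_y: int):
        self.x = reserve_x
        self.y = reserve_y

    def swap(self, amount_in: int, x_for_y: bool) -> int:
        r_in, r_out = (self.x, self.y) if x_for_y else (self.y, self.x)
        fee_adjusted = amount_in * 997
        # Floor division rounds the payout down, in the pool's favor.
        amount_out = (fee_adjusted * r_out) // (r_in * 1000 + fee_adjusted)
        r_in, r_out = r_in + amount_in, r_out - amount_out
        self.x, self.y = (r_in, r_out) if x_for_y else (r_out, r_in)
        return amount_out


def fuzz_k_never_decreases(runs: int = 10_000, seed: int = 0) -> int:
    """Apply random swaps and check the invariant after every call."""
    rng = random.Random(seed)
    pool = ConstantProductPool(10**6, 10**6)
    for _ in range(runs):
        k_before = pool.x * pool.y
        pool.swap(rng.randint(1, 10**5), x_for_y=rng.random() < 0.5)
        assert pool.x * pool.y >= k_before, "invariant broken: k decreased"
        assert pool.x > 0 and pool.y > 0, "invariant broken: reserve drained"
    return runs
```

Changing the payout to round up instead of down is a quick way to watch the invariant fail, which is the kind of regression a good fuzzing campaign exists to catch.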

At pre-launch external audit, the headline event happens — manual review by an external firm or a competitive contest. Layer on specialized reviews where they apply: oracle integration, MEV exposure, governance, key management, proxy and upgradeability. If you have off-chain infrastructure (frontend, RPC, signer setup, deploy scripts), pen-test it. After remediation, run a fix-review or re-audit before you go anywhere near production.

At launch, you guard the launch. Deposit caps, conservative parameters, kill switch, pause guardians, timelocks on admin actions. Every constructor argument, every initializer, every proxy admin gets verified twice: Wormhole's $326M and Ronin's $12M both came from missed initialization paths. Live monitoring is active on day one, not week three.

Post-launch, you run a security program. Continuous on-chain monitoring with automated pause hooks. A bug bounty sized to TVL. Recurring audits triggered by upgrades, new collateral, new chains. Red team exercises every six to twelve months. Governance review on any proposal that touches parameters, oracles, or treasury.

The external audit timeline

This is where most teams get the calendar wrong. Here's what the market actually looks like in 2026.

Lead time

Top-tier private firms — OpenZeppelin, Trail of Bits, Consensys Diligence, Spearbit, Cantina, Zellic, ChainSecurity, Halborn, Sigma Prime, Certora, Runtime Verification — quote booking queues of roughly four to twelve weeks from inquiry to kickoff. Elite-only engagements regularly run into multiple months.

The practical heuristic: reach out to two or three firms about three months before your target audit start. Hold the slot six to eight weeks before code freeze, even if scope isn't 100% locked. For a Q4 mainnet, start firm conversations no later than late Q2.

Competitive platforms — Code4rena, Sherlock, Cantina, CodeHawks — don't have a queue in the same sense. They run on fixed contest windows of one to four weeks that you slot into. This is appealing for teams who learned about audit lead times the hard way, but you still need one to two weeks of pre-contest preparation to scope, document, and onboard wardens.

Duration and pricing

The "price per LOC" model is dead. Modern auditors price on logic density and engineer-weeks. Public references from governance-funded engagements (the Arbitrum R&D Collective process, retainer disclosures) give consistent numbers.

Typical engagements by scope:

- A simple ERC-20 or vesting contract under 500 lines: one to two auditors, three days to a week, roughly $15k at tier-1 rates.

- A standard DeFi primitive of one to three thousand lines: two auditors, one to two weeks, $60k.

- A mid-size DeFi protocol around 2,500 lines with novel logic: two auditors, four to six weeks plus one to two weeks of re-audit, $300k.

- A complex multi-contract, cross-chain, or ZK system: three to four auditors, four to eight weeks plus re-audit, $500k+.

- An enterprise bridge or L1 client: four or more auditors, two to six months, $2M+.

Public rate cards from the same source set: Trail of Bits and OpenZeppelin both quote $20k/week with a documented quality floor of about three weeks per 1,000 lines. Dedaub charges $32.5k-$130k for ten audit-weeks (400 hours, four auditors). Certora's 2025 engagement with Aave for v4 formal verification was $554,400 for 24 weeks.

Sherlock's industry overview puts the realistic range at $15k for simple tokens, $60k for standard DeFi, and $40k-$100k for a serious initial audit, plus 20-30% for re-audit. For a deeper breakdown of these numbers, see our 2026 audit pricing guide and the auditor-day math in what smart contract audits actually cost.

Buffer time

A common, painful mistake: scheduling the audit to end the same day as launch. Don't. Build the buffer:

- Two to four weeks between audit kickoff and report delivery, for typical scope.

- One to three weeks between report and remediation, depending on findings.

- Three to seven days for fix-review or re-audit by the same firm.

- One to two weeks of testnet exposure with the final code — the version that will deploy.

- A public bounty or audit contest on the post-fix code for one to four weeks before mainnet, when budget allows.

Plan for four to eight weeks between audit start and mainnet for a standard DeFi protocol, eight to sixteen weeks for anything bridge-grade or financially complex. If your launch slips, that's a normal cost of doing business, not a failure.
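As a quick sanity check on the buffer list, the ranges can be summed to see what a strictly sequential schedule implies. The sketch below converts each range to days (treating a week as seven days) and keeps the optional bounty separate. Run back to back, the upper bound lands past the eight-week figure, so the four-to-eight-week guidance probably assumes that some phases overlap or finish near their lower bounds. That reading is an inference, not something the figures state.

```python
# Buffer ranges in days, taken from the list above: (minimum, maximum).
BUFFERS = {
    "audit kickoff to report": (14, 28),
    "remediation": (7, 21),
    "fix-review or re-audit": (3, 7),
    "testnet exposure of final code": (7, 14),
}
OPTIONAL_BOUNTY = (7, 28)  # public bounty or contest on post-fix code


def total_window(buffers, include_bounty=False):
    """Sum the minimum and maximum durations of all phases run sequentially."""
    low = sum(days[0] for days in buffers.values())
    high = sum(days[1] for days in buffers.values())
    if include_bounty:
        low += OPTIONAL_BOUNTY[0]
        high += OPTIONAL_BOUNTY[1]
    return low, high


low, high = total_window(BUFFERS)
print(f"sequential: {low}-{high} days ({low / 7:.1f}-{high / 7:.1f} weeks)")
# prints "sequential: 31-70 days (4.4-10.0 weeks)"
```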

Code freeze

Auditors expect a code freeze at audit start: a specific commit hash, a documented scope, and a public commitment that the team won't push functional changes during the audit. Allowed during a freeze: doc updates, comments, test additions. Not allowed: refactors, new features, scope changes, or "while we're at it" tweaks. Every change after freeze either invalidates findings or burns auditor hours rediscovering already-reviewed paths. If you must change something mid-audit, communicate immediately and expect timeline slippage. Our pre-audit checklist covers what to lock down before the freeze starts.
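A freeze is easier to keep when it is checked mechanically. The sketch below lists files changed since the freeze commit and flags anything outside documentation and tests. The directory names (`docs`, `test`) and the `.md` suffix are assumptions about repository layout, and a path filter cannot tell a comment-only edit from a functional one, so flagged source files still need a human look.

```python
import subprocess
from pathlib import PurePosixPath

# Assumptions: docs live under docs/, tests under test/, Markdown is documentation.
ALLOWED_DIRS = ("docs", "test")
ALLOWED_SUFFIXES = (".md",)


def is_allowed(path: str) -> bool:
    """True if a changed path falls in a category the freeze permits."""
    p = PurePosixPath(path)
    return p.parts[0] in ALLOWED_DIRS or p.suffix in ALLOWED_SUFFIXES


def freeze_violations(changed_paths):
    """Return the changed paths that need review before the audit continues."""
    return [p for p in changed_paths if not is_allowed(p)]


def changed_since(freeze_commit: str):
    """List files that differ between the freeze commit and HEAD."""
    out = subprocess.run(
        ["git", "diff", "--name-only", f"{freeze_commit}..HEAD"],
        check=True, capture_output=True, text=True,
    ).stdout
    return [line for line in out.splitlines() if line]
```

In CI, `freeze_violations(changed_since(FREEZE_COMMIT))` returning a non-empty list is the signal to stop and talk to the auditors before merging.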

What actually drives cost

Beyond LOC: logic density (a 500-line ZK circuit costs more than 2,000 lines of token boilerplate), codebase size, language premium (Rust, Move, Cairo, and Vyper carry roughly a 20-30% markup because the auditor pool is smaller), urgency tax (two-week turnaround on a complex protocol typically adds 20-50%), firm reputation, scope (smart contracts only or full stack including frontend, RPC, bridge relayers, governance UIs), and documentation quality (strong specs and 90%+ test coverage can reduce final cost by 15-25%).
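The percentage drivers above can be turned into a rough range estimator. This is a back-of-the-envelope sketch, not a quoting tool: stacking the adjustments multiplicatively is an assumption, the factor names are hypothetical, and the engineer-weeks and weekly rate are inputs you supply.

```python
# (low %, high %) multipliers, from the ranges quoted in the paragraph above.
ADJUSTMENTS = {
    "niche_language": (120, 130),  # Rust, Move, Cairo, Vyper: roughly +20-30%
    "rushed": (120, 150),          # urgency tax: roughly +20-50%
    "strong_docs": (75, 85),       # strong specs and coverage: roughly -15-25%
}


def estimate_range(engineer_weeks: int, weekly_rate: int, factors=()):
    """Return a (low, high) cost range after applying each named adjustment."""
    low = high = engineer_weeks * weekly_rate
    for name in factors:
        low_pct, high_pct = ADJUSTMENTS[name]
        low = low * low_pct // 100
        high = high * high_pct // 100
    return low, high


# Two auditors for four weeks at an illustrative $25k per engineer-week,
# for a Rust codebase on a rushed timeline.
print(estimate_range(8, 25_000, ["niche_language", "rushed"]))
# prints (288000, 390000)
```

The same engagement with strong documentation and no other adjustments comes out at (150000, 170000), which is the argument for investing in specs and tests before asking for a quote.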

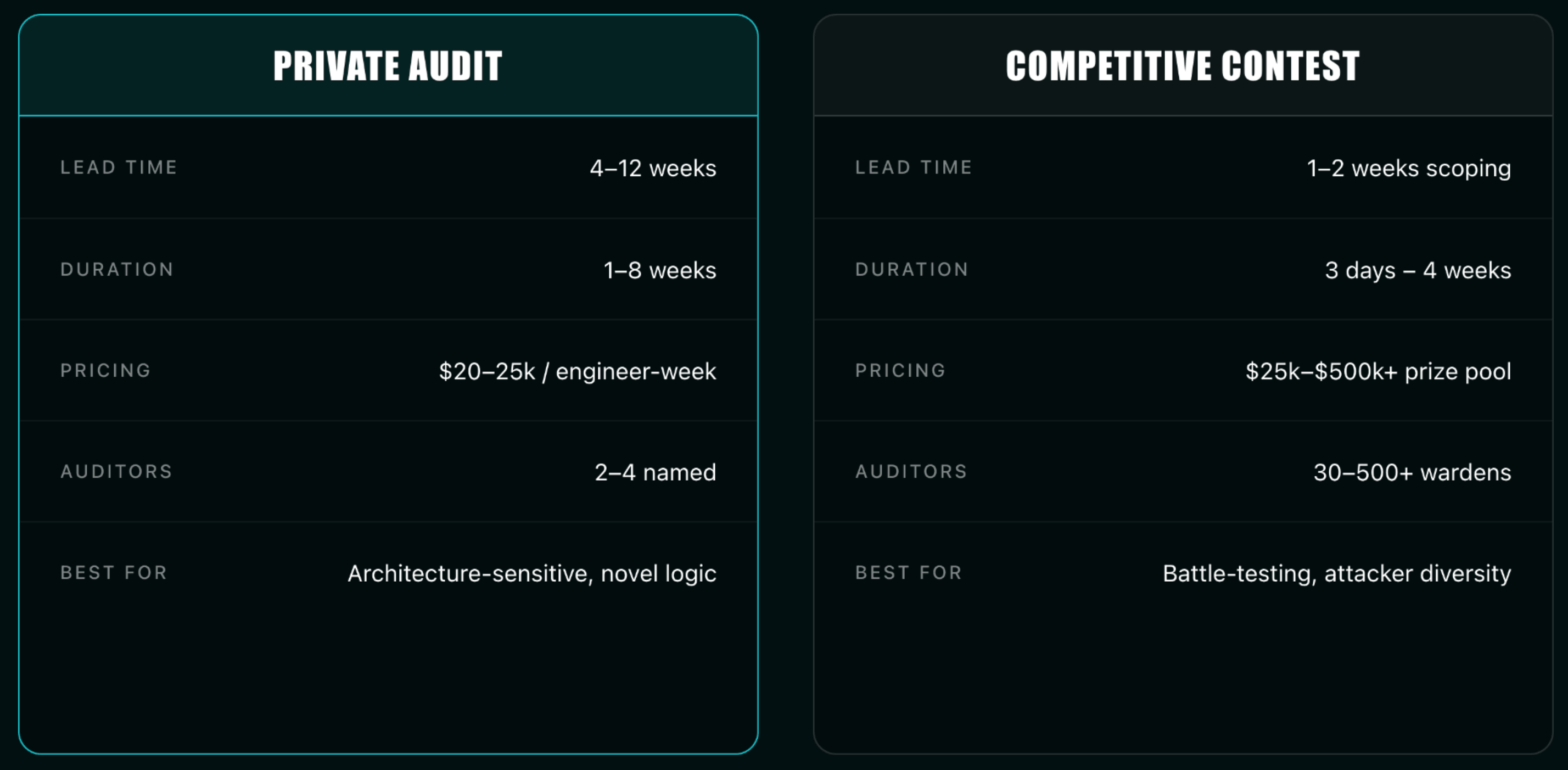

Private audit vs. competitive contest

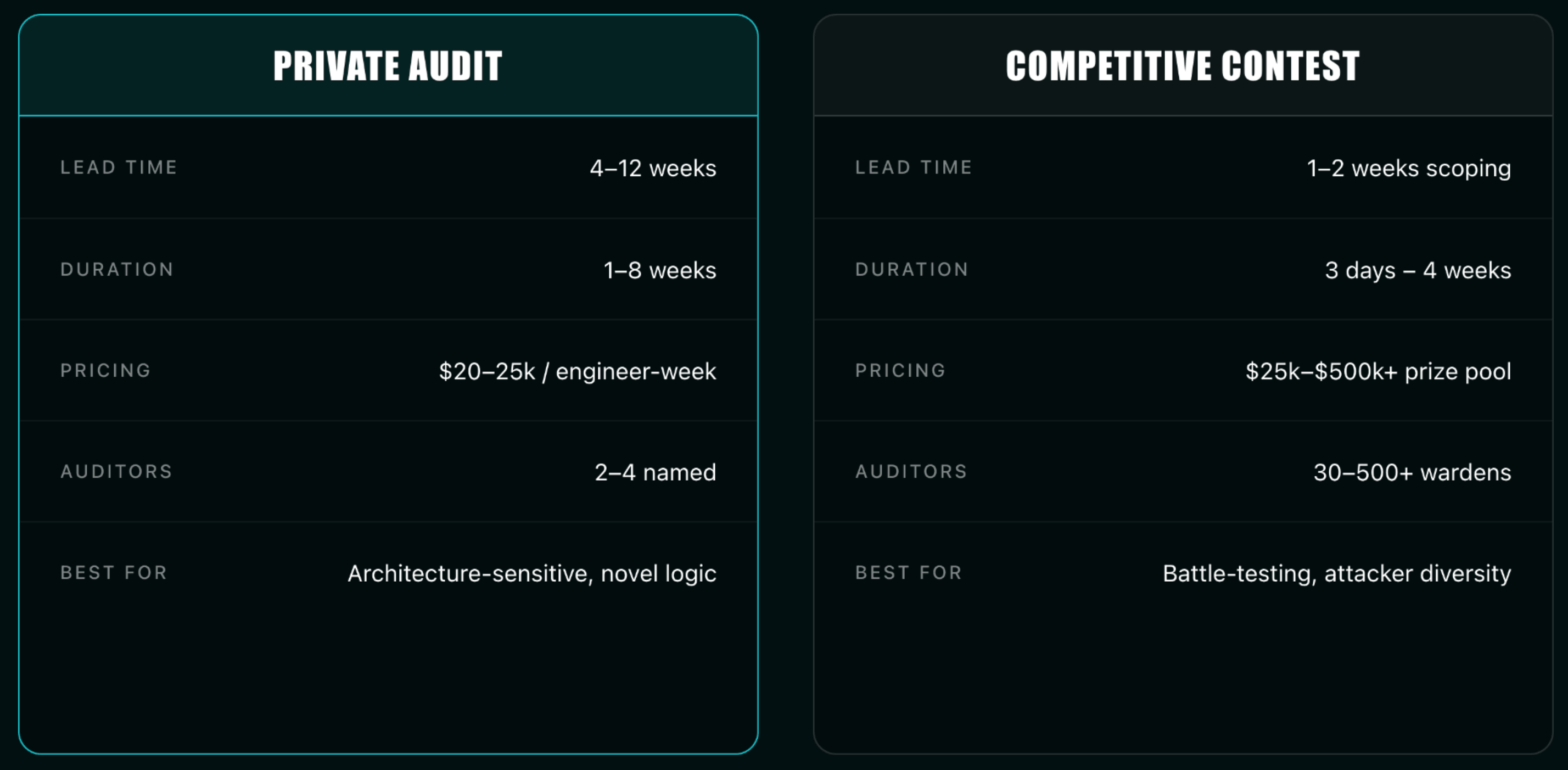

Private firm audit: lead time of 4-12 weeks, duration 1-8 weeks, priced in engineer-weeks at $25k/wk for tier-1, two to four named auditors, deep and methodical coverage, real-time dialogue with engineers, formal report. Best for architecture-sensitive code, novel logic, anything that requires conversation with the engineers.

Competitive contest: 1-2 weeks scoping followed by a fixed contest window of 3 days to 4 weeks, priced as a prize pool ($500k+), 30 to 500+ wardens, wide and competitive coverage, public report after triage and judging. Best for battle-testing already-mature code, attacker diversity, harder-to-find issues.

The serious answer is to layer them. Private audit first for depth and dialogue. Contest second for breadth and adversarial diversity. Bug bounty third for continuous coverage. The Ethereum Foundation's recent Fusaka stress test on Sherlock (a $2M contest with 510+ researchers that surfaced four high-severity findings) is the textbook example of "we already audited internally, now go try to break it in public."

Continuous and milestone-based: how mature teams actually work

The one-and-done audit is the security equivalent of a single annual physical for a sixty-year-old marathon runner. It misses everything that changes between visits.

The recommended cadence for a serious DeFi protocol involves five touchpoints. Architecture review before substantial code is written, producing a trust model, threat model, and invariant catalog (one to two engineer-weeks). Mid-development checkpoint when about 60-70% of code is written and interfaces are stable, catching design drift early (one to three engineer-weeks). Final pre-launch audit on frozen code (the big one). Fix-review or re-audit within one to four weeks of remediation. Post-deploy audit of the actually-deployed bytecode and configuration — often the cheapest audit on the list and the one most teams skip.

This staging is exactly the structure SAMM's Verification practice and the SSDF's "Produce Well-Secured Software" group point at. It's also what OpenZeppelin's Secure Smart Contract Development Roadmap prescribes. We expanded this thinking into an engineering workflow in our defense-in-depth audit playbook.

The shift from event to program is happening industry-wide. OpenZeppelin's incubation of Forta, the launch of Defender as an end-to-end developer security platform, Sherlock's framing of audits as an ongoing investment rather than a one-time cost — all point at the same thing. A program looks like CI-integrated SAST and fuzzing on every PR; property tests and invariants maintained as living artifacts; monthly or quarterly retainers with a security firm; a bug bounty live from day one of mainnet; continuous on-chain monitoring with automated response; and triggered audits for any change touching value-bearing code.

"Shift left" is the discipline of moving security checks earlier in the SDLC. In Web3 it means Slither, Aderyn, and Mythril running in CI as PR checks; Echidna and Foundry invariant tests in the pre-merge test suite; threat-modeling review as a required step for any new contract module; and formal verification written alongside the code, not bolted on afterward.

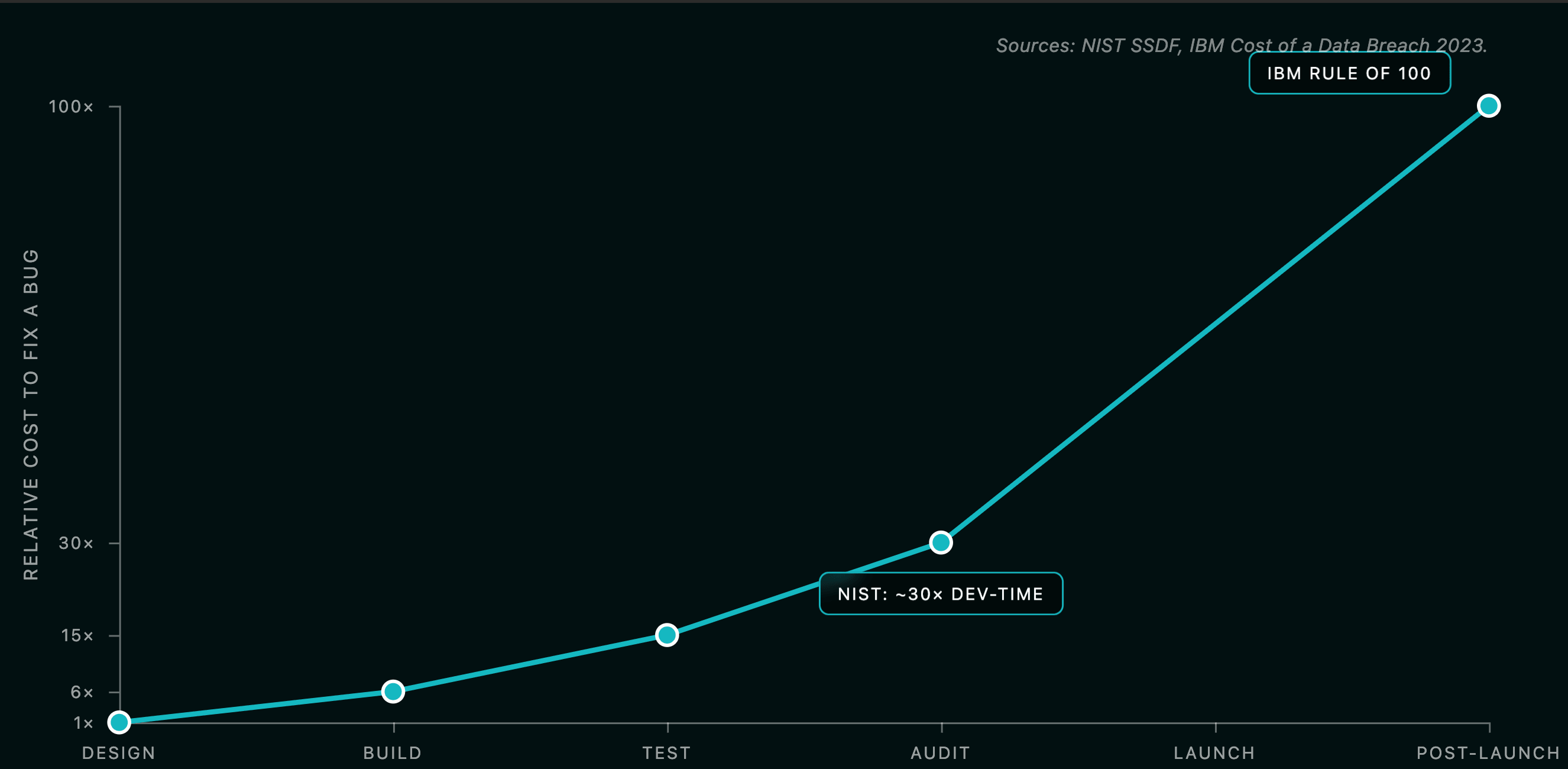

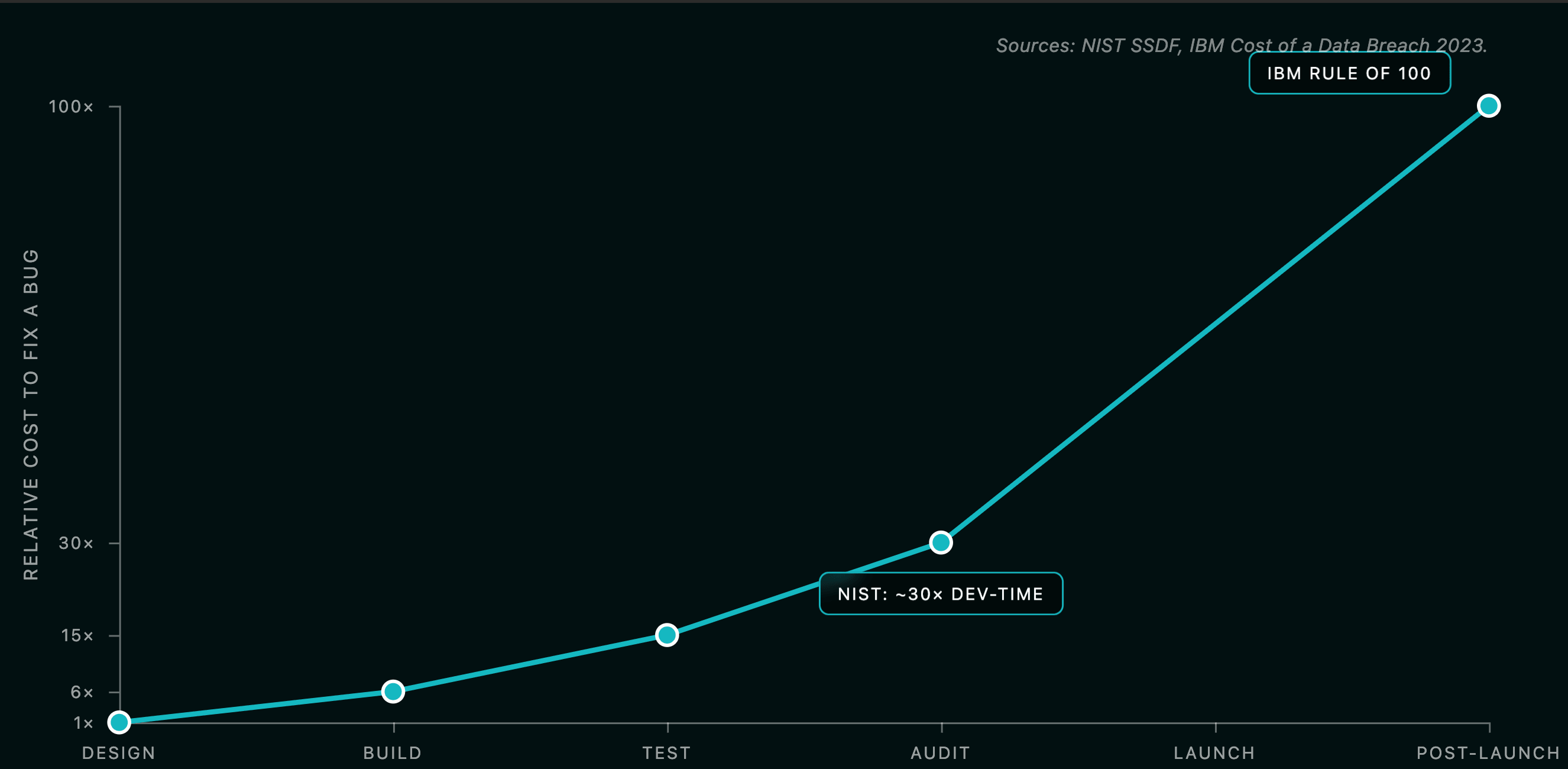

The economic argument is well-known. NIST estimates fixing a vulnerability in production is roughly thirty times more expensive than fixing it during development. The IBM "Rule of 100" — design fix 1x, implementation 6x, testing 15x, post-release 100x — is widely cited and equally widely critiqued for thin original sourcing, but the qualitative pattern is supported by every modern analog. Veracode found average remediation time grew from 59 days in 2010 to 171 days in 2019. HackerOne case studies show pre-merge fixes typically taking 30 minutes vs. 15 hours in production. In smart contracts the multiplier is sharper still, because production is immutable, observable to attackers, and often holds bearer assets. A bug fixed in design costs hours of researcher time. The same bug fixed after exploitation can cost the entire protocol — see the case studies in our 2025 exploit lessons write-up.

And audits are one layer. A modern Web3 security stack contains secure-by-default libraries (OpenZeppelin Contracts, Solady, Solmate); static analysis in CI (Slither, Aderyn); property-based and invariant tests (Foundry, Echidna, Medusa); formal verification (Certora, Halmos, Kontrol, K); manual external audit, private and contest; bug bounty (Immunefi, Cantina, HackenProof); runtime monitoring (Forta, Defender, Hypernative, Hexagate); automated incident response (pause guardians, circuit breakers, timelocks); and governance hardening (multisig, timelock, off-chain signature schemes). Skip any one of these and your "audited" protocol is still under-defended. We compare the audit and insurance layers in detail in audit vs DeFi insurance.

In the Euler Finance exploit of March 2023, the vulnerability behind the $197M flash-loan attack went undetected. The lesson isn't "Euler skipped audits." The lesson is that audits without continuous coverage of subsequent code changes leave you exposed. Our post-audit security playbook walks through exactly which controls close that gap.

Mistimed audits: the real cost of getting the calendar wrong

Auditing too early. Symptoms: significant code churn during the audit, auditors flagging issues in code that no longer exists, the team re-explaining context every other day, the final report referencing functions you've since removed. Cost: the full audit fee for materially less assurance than a properly timed audit. Re-audit becomes mandatory. Net waste of 30-60% of the engagement.

Auditing too late. Symptoms: critical findings landing days before launch, founder pressure to ship anyway and patch later, rushed remediation, re-audit squeezed into a weekend. Cost: either you launch with known unfixed criticals (existential risk) or you slip the launch and absorb the marketing and reputational hit. Ronin Bridge's August 2024 upgrade lost $12M, a cost that dwarfs the $5-10k they would have spent on tooling.

One-and-done mentality. Symptoms: protocol audited at launch, then runs for 18 months while shipping new collateral types, new chains, and new oracle integrations, none of which see an auditor. Eventually exploited. This pattern is responsible for a meaningful share of post-launch DeFi losses. Euler is the canonical case.

Audit theater. Symptoms: cheapest possible audit, "audited by ___" badge added to the website, no remediation visible in the report, scope conveniently excluding the riskiest contracts. Real cost: false confidence. Investors and users assume risk has been managed when it hasn't. The 2024 hacks of $2.2B+ in stolen DeFi funds disproportionately hit "audited" projects whose audits were narrow or shallow. The investor side of this story is in smart contract audit ROI for investor due diligence.

A few exploits where timing or scope mattered:

- Ronin Bridge ($624M, March 2022): validator key compromise. Not a smart contract bug per se, but the multi-sig threshold (5-of-9) and the unrevoked Axie DAO whitelist were governance and key-management issues that an ongoing operational security review would have flagged. A "checked the contracts once at launch" mentality cannot catch this.

- Wormhole ($326M, February 2022): missing signature verification on a Solana sysvar account. Modern Solana audit practices — Soteria static analysis, exhaustive account-validation checklists, adversarial fuzzing — catch this kind of bug. The audit at the time didn't include those steps. We wrote up the modern checklist in our Solana 2026 security guide.

- Nomad Bridge ($190M, August 2022): faulty initialization (zero merkle root accepted as valid) shipped in a routine upgrade. Anyone could replay messages by copying the exploit transaction. The "first crowd-looted hack."

- BadgerDAO ($120M, December 2021): frontend supply-chain compromise, not a smart contract bug. A pure smart-contract audit could not have caught this. An end-to-end review including frontend, deploy pipeline, and operational practices would have. See supply chain attacks in Web3 for the broader pattern.

- Curve Finance ($73M, July 2023): a Vyper compiler bug that broke `@nonreentrant` in deployed bytecode. Reminder that your toolchain is in scope, not just your source.

The cost curve, in numbers: NIST puts post-production fixes at ~30x development-time fixes. IBM's "Rule of 100" places design at 1x, implementation at 6x, testing at 15x, post-release at 100x. IBM's 2023 Cost of a Data Breach Report puts the average breach at $9.44M in the US. DeFi-specific: $2.2B stolen in 2024 alone. Audit pricing: $500k+ for a complex bridge. Even a high-end audit is typically less than 1% of the value it protects. The full ROI math is in how audits boost gas savings and market cap.

The asymmetry is stark: a $100k audit set against a $100M+ exploit. That's a 1000x return, and it's why mature treasuries budget audits as recurring opex, not one-time capex.

After mainnet: the timeline that actually matters

Most security writing treats launch as the finish line. It's the starting line. Mainnet is the first day your code is in the dark forest.

Recurring audits. Any change to a contract holding user funds gets reviewed. New collateral, new oracle integrations, new chain deployments, new vault strategies, new governance modules — all in scope. Lightweight reviews (1-3 engineer-weeks) work for incremental changes. Full audits are warranted for protocol-version bumps. Retainer arrangements (Venus + OpenZeppelin: 2.39M for ~4.5 FTE-years on v4) are how serious protocols structure this.

Continuous monitoring. Deploy on day one, not as an afterthought. Forta runs real-time detection bots on a decentralized scanner network, adopted by dYdX, Balancer, and Compound, with free public alerts and private bots. OpenZeppelin Defender provides Sentinels (custom condition monitors), Autotasks (automated response), Forta integration, and Relayers for transaction execution — the integration of choice for alert + auto-pause pipelines. Hypernative is an ML-based predictive platform across cyber, economic, governance, and community threats. Hexagate is enterprise-grade with strong attack-pattern detection. Chaos Labs handles economic and parameter risk. Tenderly Alerts, Phalcon, and Spotter are useful supplementary tools.

A typical setup: Forta detection bot fires an alert → Defender Sentinel picks it up → Defender Autotask calls `pause()` on the affected contract via a Relayer with a pre-authorized role. End-to-end pause within seconds.

Bug bounty. Industry-leading platforms are Immunefi (currently securing >10M in W tokens for Tier 1; most large DeFi caps criticals at 2.5M, often with a percentage-of-funds-saved formula; smaller protocols set 500k caps with minimum guaranteed rewards of $5k+. Operational SLAs: response within 24 hours, triage within 72 hours, payout within 14-30 days of severity confirmation.

Incident response planning. Have these in place before mainnet, not after the first incident. A war-room runbook with named pagers, key holders, exchange contacts, and social handlers. Pause and kill-switch authority with documented roles, multisig-controlled. A white-hat rescue strategy: a pre-defined process for "we found the bug, do we white-hat-front-run our own protocol?" Communication templates for Twitter, Discord, governance forum. Centralized exchange contacts to freeze stolen funds. A post-mortem commitment within 7-14 days.

According to the Euler post-mortem ("War & Peace: Behind the Scenes of Euler's $240M Exploit Recovery"), the return of funds from the attacker came from a combination of on-chain negotiation, public bounty escalation, and law-enforcement pressure.

Red team and war games. Every six to twelve months for live protocols holding non-trivial TVL. Goals: stress-test the IR runbook, find configuration drift, identify governance and key-management decay. Cyfrin, Trail of Bits, Halborn, and SlowMist all offer structured engagements.

Circuit breakers. Non-negotiable for any protocol with more than $10M TVL. Pausability on functions that move user funds. Pause guardian role separated from upgrade admin (least privilege). Timelock on parameter changes and upgrades — typically 24-72 hours for routine changes, longer for treasury moves. Multisig (Safe) for admin actions, with off-site key custody and rotation policies. Withdrawal limits (rate-limited bridges; Wormhole's "Governor" caps critical-vuln payouts at 10% of extractable value over 24 hours).
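The withdrawal-limit idea can be modeled as a rolling-window cap on total outflow. The sketch below is a Python model of the logic only, not contract code and not any specific protocol's implementation. The class name, the 24-hour default window, and the reject-outright behavior are illustrative assumptions, and a production design might queue or delay over-limit withdrawals instead.

```python
from collections import deque


class WithdrawalLimiter:
    """Rolling-window cap on total outflow."""

    def __init__(self, cap: int, window_seconds: int = 24 * 3600):
        self.cap = cap
        self.window = window_seconds
        self._events = deque()  # (timestamp, amount), oldest first
        self._total = 0         # sum of amounts still inside the window

    def _expire(self, now: int) -> None:
        """Drop withdrawals that have aged out of the window."""
        while self._events and self._events[0][0] <= now - self.window:
            _, amount = self._events.popleft()
            self._total -= amount

    def try_withdraw(self, amount: int, now: int) -> bool:
        """Record the withdrawal and return True if it fits under the cap."""
        self._expire(now)
        if self._total + amount > self.cap:
            return False
        self._events.append((now, amount))
        self._total += amount
        return True


limiter = WithdrawalLimiter(cap=100, window_seconds=10)
print(limiter.try_withdraw(60, now=0))  # True
print(limiter.try_withdraw(50, now=5))  # False: 60 + 50 exceeds the cap
print(limiter.try_withdraw(60, now=10))  # True: the first withdrawal has expired
```

The point of the pattern is that an attacker who finds a bug can only extract the cap per window, which buys the pause guardian time to act.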

Governance. A surprising fraction of post-launch losses come from governance, not development. Voter apathy enabling governance attacks. Flash-loan-driven proposal passage. Compromised multisig signers. Missing or insufficient timelock on parameter changes. Oracle reconfigurations that change risk profile silently. Treat governance like a contract: review proposals, audit parameter changes, monitor delegations.

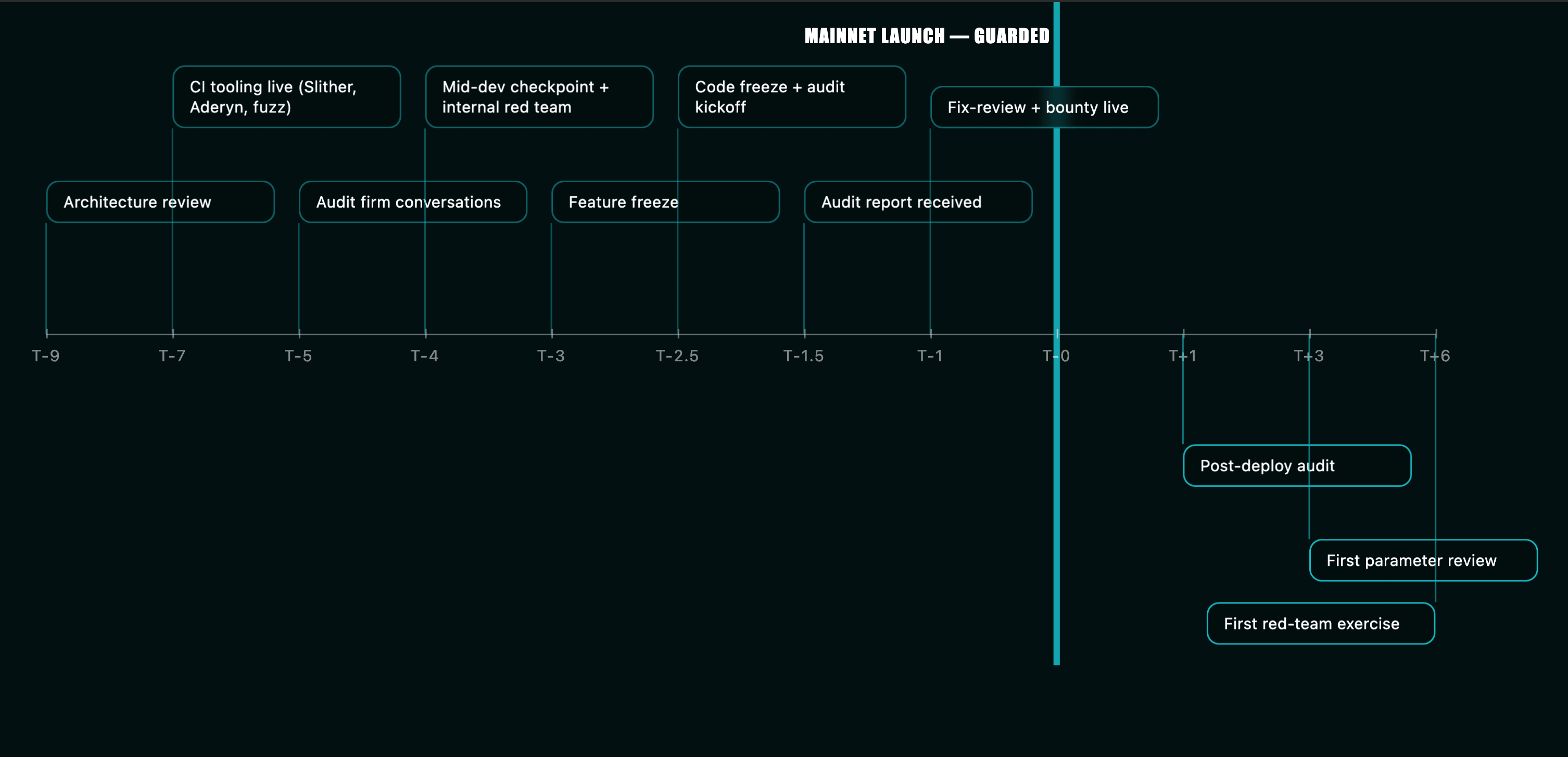

A practical calendar

For a typical mid-size DeFi protocol planning a Q4 mainnet, the security calendar looks like this.

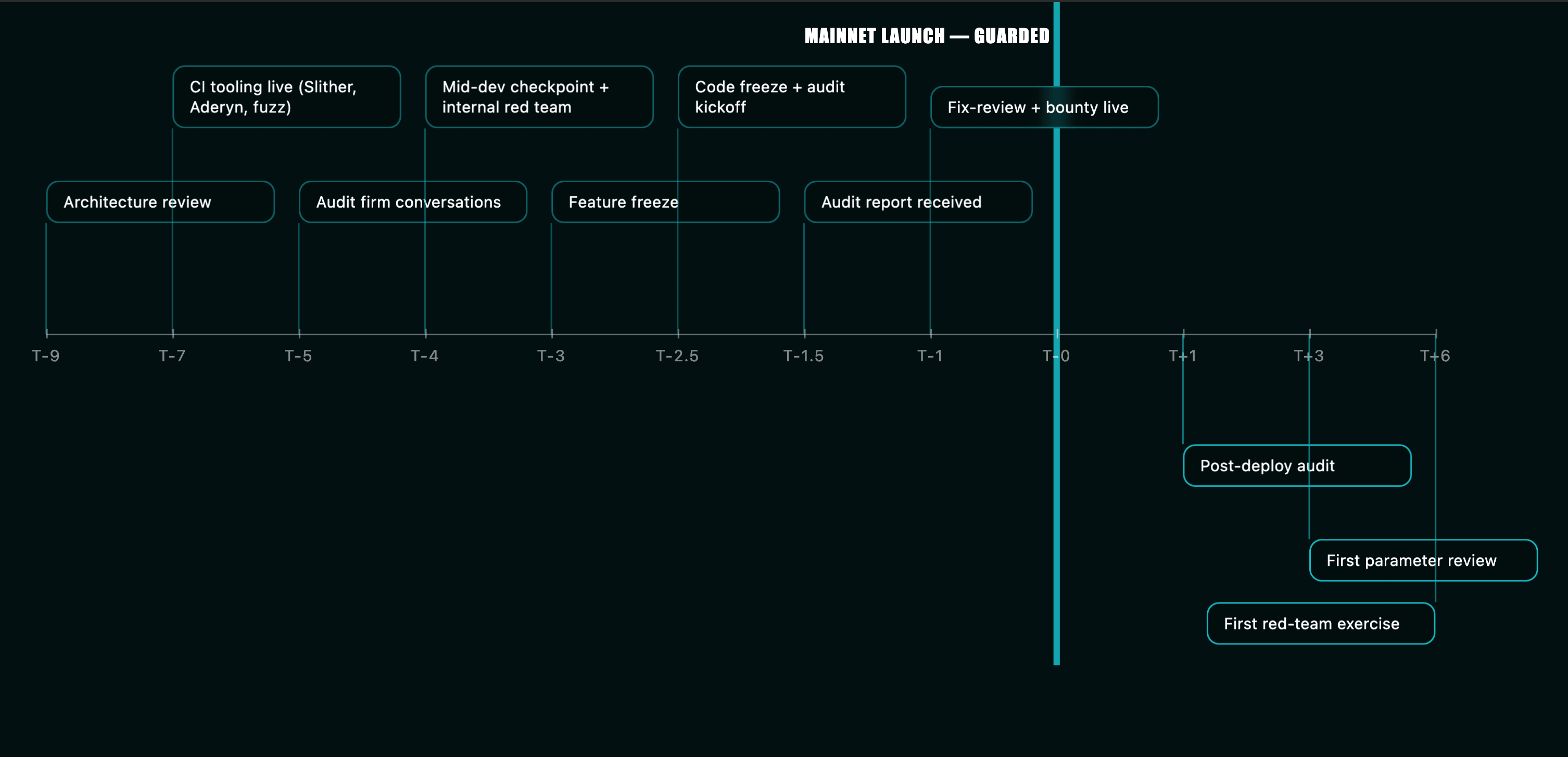

- T-9 to T-7 months: finish the whitepaper, threat model, and architecture review (engagement #1 with your security partner). Establish invariants.

- T-7 to T-4: active development continues with CI running Slither and Aderyn, and Echidna/Foundry invariant tests grow alongside the code.

- T-5: start conversations with audit firms and book the slot.

- T-4: run a mid-development security checkpoint and an internal red-team exercise.

- T-3: feature freeze; final fuzzing campaign; documentation pass; pre-audit readiness review.

- T-2.5: code freeze and audit kickoff.

- T-2 to T-1.5: the audit runs, with devs available and no functional changes.

- T-1.5: audit report received, remediation begins.

- T-1: fix-review or re-audit; public bounty live (or audit contest on post-fix code); final testnet exposure.

- T-0: mainnet launch, guarded. Caps in place. Monitoring (Forta + Defender) active. War-room runbook published internally.

- T+1 month: post-deploy audit of deployed bytecode and configuration. Bug bounty cap raised.

- T+3 months: first parameter review. Cap relaxation if no incidents.

- T+6 months: first red-team exercise. Recurring audit retainer or contest for any upgrades shipped in the interim.

- Ongoing: every code change touching value gets reviewed. Monitoring tuned quarterly. Bug bounty scaled with TVL.

That timeline assumes nothing goes wrong. Things will go wrong. Build slack into every stage. Pad the buffer between audit and launch by at least a week beyond what you think you need.
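To turn the T-minus offsets into dates, a small helper can walk backward and forward from a target mainnet date. This is a planning sketch under stated assumptions: a month is approximated as 30 days, ranges such as "T-9 to T-7" are anchored at their start, the labels are shortened from the calendar above, and the launch date in the example is made up.

```python
from datetime import date, timedelta

DAYS_PER_MONTH = 30  # planning approximation, not calendar-exact

# (offset in months relative to mainnet, milestone)
MILESTONES = [
    (-9.0, "architecture review starts"),
    (-5.0, "contact audit firms and book slot"),
    (-4.0, "mid-development checkpoint"),
    (-3.0, "feature freeze"),
    (-2.5, "code freeze and audit kickoff"),
    (-1.5, "report received, remediation begins"),
    (-1.0, "fix-review, bounty, final testnet"),
    (0.0, "guarded mainnet launch"),
    (1.0, "post-deploy audit"),
    (3.0, "first parameter review"),
    (6.0, "first red-team exercise"),
]


def build_calendar(mainnet: date):
    """Return (date, milestone) pairs in chronological order."""
    return [
        (mainnet + timedelta(days=int(offset * DAYS_PER_MONTH)), label)
        for offset, label in MILESTONES
    ]


for when, label in build_calendar(date(2026, 11, 2)):  # hypothetical launch date
    print(when.isoformat(), label)
```

For that example launch date, code freeze lands on 2026-08-19. Adding the extra week of slack recommended above means moving that date, and everything before it, a week earlier.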

Audits are a process, not a product

The teams that get exploited in 2026 won't be the teams that didn't audit. They'll be the teams that audited too late, audited too narrowly, audited once and never again, or treated the audit report as the artifact instead of the conversation. The teams that survive will be the ones who treat security as a continuous discipline distributed across the lifecycle — designed for, written for, tested for, audited for, monitored for, and responded to.

A reasonable budget heuristic: for every 50k-$150k on security in year one, split across architecture review, development tooling, formal verification where applicable, manual audit(s), bug bounty, and monitoring. That's one to two orders of magnitude cheaper than the cost of a mid-size exploit. And it buys you something more valuable than any individual audit report: a protocol whose security posture survives the next surprise.

When to audit? Earlier than you think. More often than you think. And forever after launch.

The calendar above is a starting point. Your protocol's specifics — chain, complexity, TVL trajectory, upgrade cadence — will shape it. But if you walk away with a clear mental model that an audit is one event in a program, not the program itself, you're already ahead of most of the protocols that will hit the headlines this year.

Get in touch with Zealynx

Zealynx Security audits Web3 protocols across Solidity, Solana, and emerging VMs. If you're planning a launch in the next two quarters and want to talk about timing — whether or not we end up being the right firm for your audit — get in touch with us. We'll help you map the calendar, scope the right cadence, and avoid the mistimed-audit failure modes that cost protocols millions.

FAQ: When and how often to audit

1. When should I start booking my smart contract audit firm?

For a Q4 mainnet, start firm conversations no later than late Q2 — about three months before your target audit start. Top-tier firms (OpenZeppelin, Trail of Bits, Spearbit, Cantina, Zellic, ChainSecurity) have booking queues of four to twelve weeks. Hold a slot six to eight weeks before code freeze, even if scope isn't 100% locked. Booking late means either delaying your launch or accepting a less experienced firm.

2. What is a code freeze, and why do auditors require one?

A code freeze is a public commitment to a specific commit hash where no functional changes are pushed during the audit window. Doc updates, comments, and test additions are allowed; refactors, new features, and scope changes are not. Auditors require this because every post-freeze change either invalidates findings or burns auditor hours rediscovering already-reviewed paths. If you must change something mid-audit, communicate immediately and expect the timeline to slip.

3. What is the difference between a private audit and a competitive contest?

A private audit is a multi-week engagement with two to four named auditors from a single firm, priced at $25k per engineer-week, with deep methodical coverage and direct dialogue with engineers. A competitive contest (Code4rena, Sherlock, CodeHawks, Cantina) runs as a fixed prize pool ($500k+) over a 3-day to 4-week window with 30 to 500+ wardens, producing wide adversarial coverage. Mature teams layer them: private audit first for depth, contest second for breadth, bug bounty third for continuous coverage.

4. What is a guarded launch, and why does it matter on day one?

A guarded launch is a mainnet deployment with deliberate operational constraints active from block one: deposit caps, conservative parameters, a kill switch, pause guardians, and timelocks on admin actions. The point is to shrink the blast radius of any bug that survived the audit. Wormhole's $326M and Ronin's $12M both came from initialization paths that a guarded launch would have caught, or at least contained, before exposure scaled.

5. How often should a live protocol get re-audited after mainnet?

Any code change touching contracts that hold user funds gets reviewed. Lightweight reviews (1-3 engineer-weeks) for incremental changes; full audits for protocol-version bumps; spot reviews triggered by new collateral, new chains, or new oracle integrations. Mature protocols structure this as a retainer (Venus + OpenZeppelin: 2.39M for v4 formal verification). Plan an additional red-team exercise every 6-12 months and a post-deploy audit of the actually-deployed bytecode within a month of launch.

6. What is "audit decay" and how do I prevent it?

Audit decay is the gradual divergence between a point-in-time audit report and the live protocol, caused by code changes, dependency upgrades, governance actions, and EVM-level shifts after the audit closes. Prevent it with continuous on-chain monitoring (Forta, Defender, Hypernative), runtime invariant enforcement (EIP-7265 circuit breakers), bug bounties scaled to TVL, recurring audits triggered by upgrades, and a rehearsed incident-response runbook. Protocols that pre-wire these controls recover 80-100% of funds from exploits; protocols that rely only on the audit rarely recover any.

Glossary

| Term | Definition |
|---|---|
| Audit Timeline | The full sequence of security activities scheduled across a protocol's lifecycle, from architecture review to post-deploy audit. |
| Audit Decay | The gradual divergence between a point-in-time audit report and the live protocol after code, dependency, or governance changes. |
| Audit Readiness | The state of a codebase being prepared for a formal audit: frozen code, test coverage, documented invariants. |
| SDLC | Software Development Life Cycle: the structured phases of planning, building, testing, and deploying smart contracts. |
| Circuit Breaker | An on-chain mechanism (e.g., EIP-7265) that pauses or rate-limits sensitive operations when an invariant is violated. |
| Bug Bounty | A continuous program rewarding external researchers for responsibly disclosing vulnerabilities, typically scaled to TVL. |
