

Post-audit security: why the audit is a commit hash, not a security posture


Every audit report you have ever received attests to a single thing: at commit `0xdeadbeef`, a specific team of reviewers did not find certain classes of bugs inside a bounded time budget. That is a useful artifact. It is not a security posture.

The distance between those two ideas is where protocols lose money. Euler passed six audits, held a $1M Immunefi bounty running for months, and lost $197M in March 2023 to a vulnerability introduced by a fix (itself audited) for a different bug reported via that bounty. Nomad's fatal `confirmAt[0x00] = 1` change went in during the audit window and passed post-remediation review. Ronin's November 2021 allowlist was never revoked after the promotional gas-sponsorship program ended; nobody was watching, and the six days between exploit and discovery came from a user complaining about a failed withdrawal.

Audits answer a narrow question well. "Is our protocol secure?" is not that question.

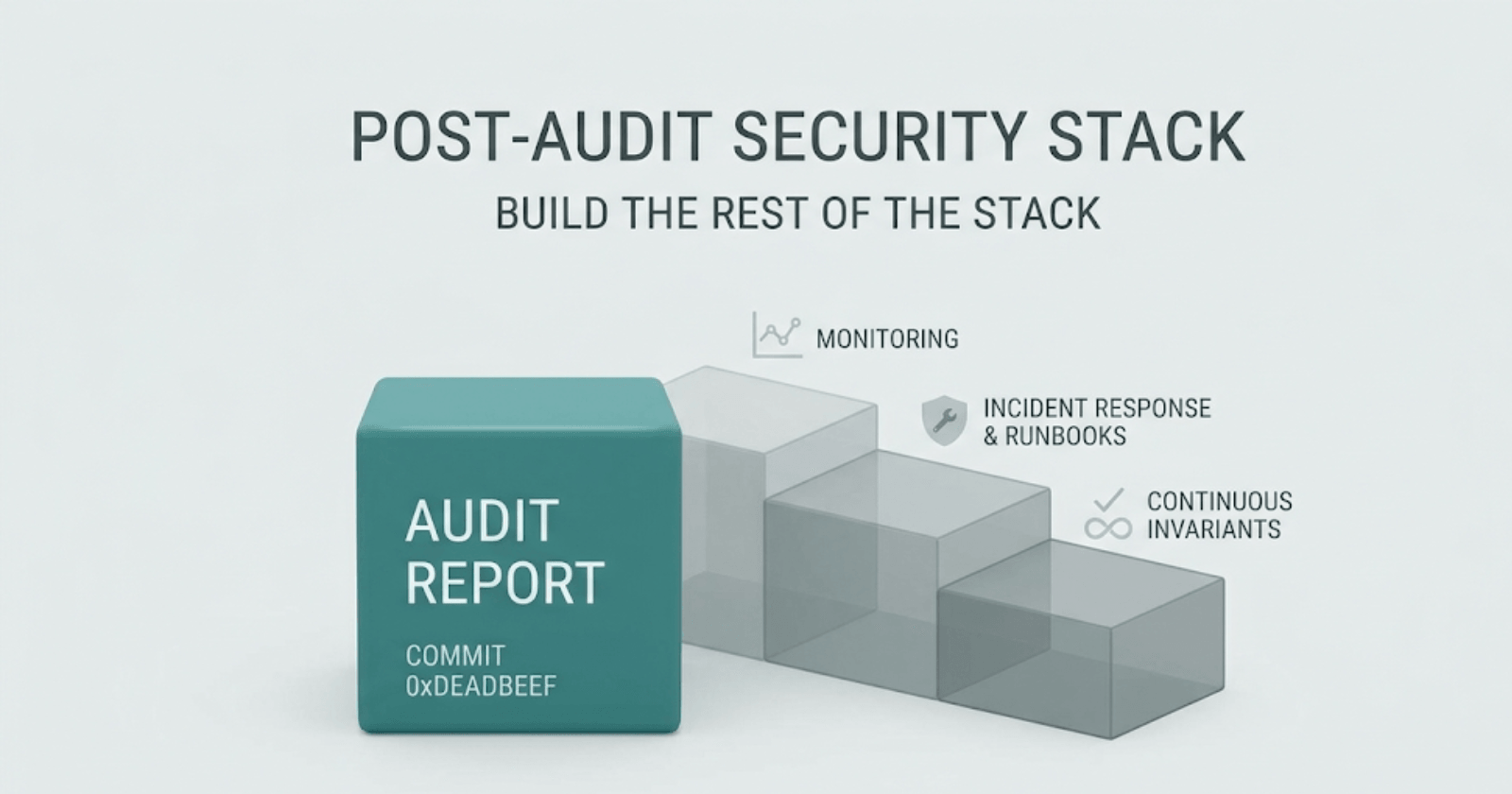

This post is for protocol teams who have just finished — or are about to finish — an audit, and now have to build the rest of the stack: monitoring, runbooks, automated pauses, continuous invariant checks, OpSec, and the organizational muscle that keeps funds in the contract between audit reports. It is deliberately practitioner-focused. Named incidents, dated, with root causes and what would have changed the outcome.

Why audits decay

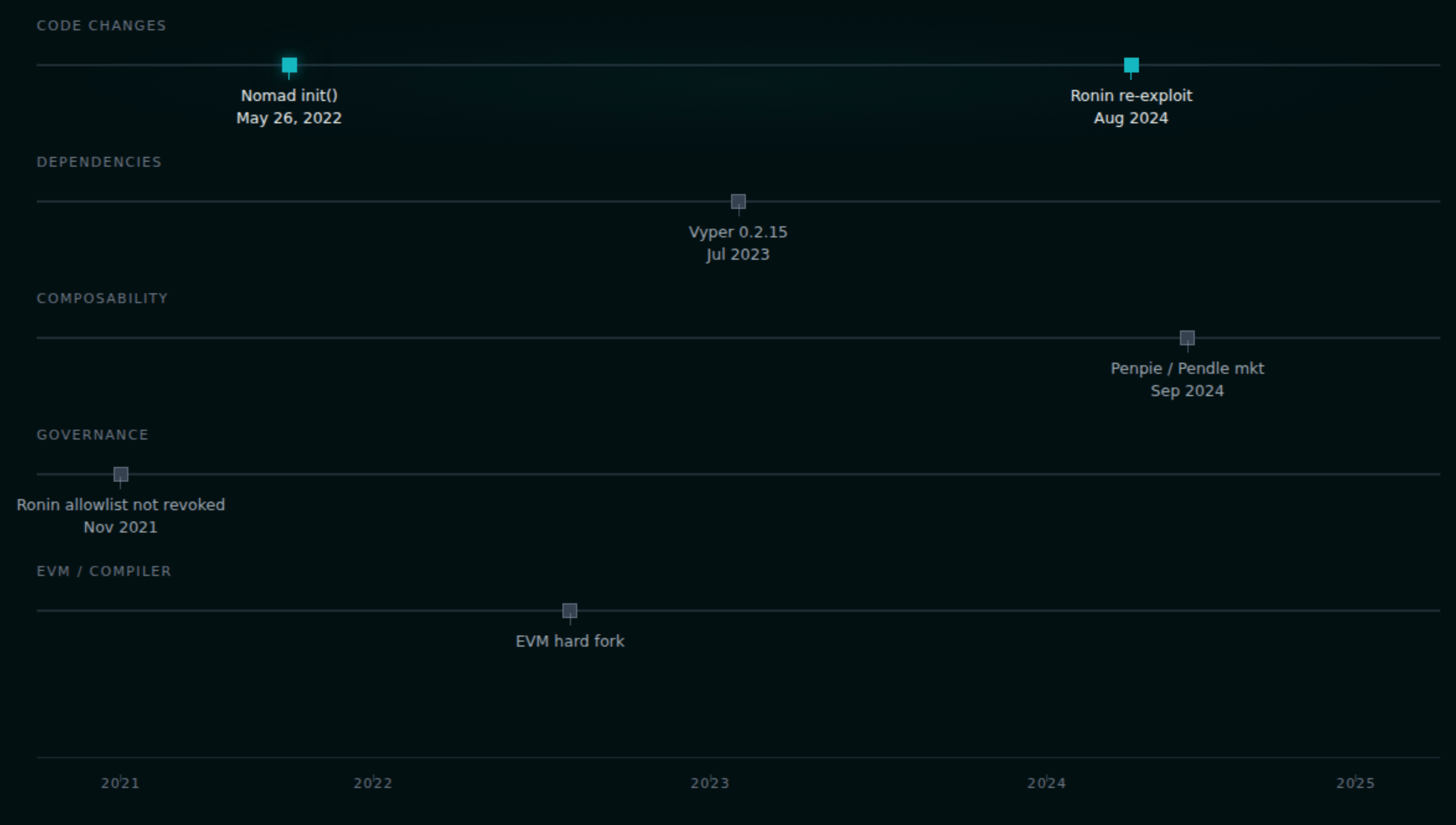

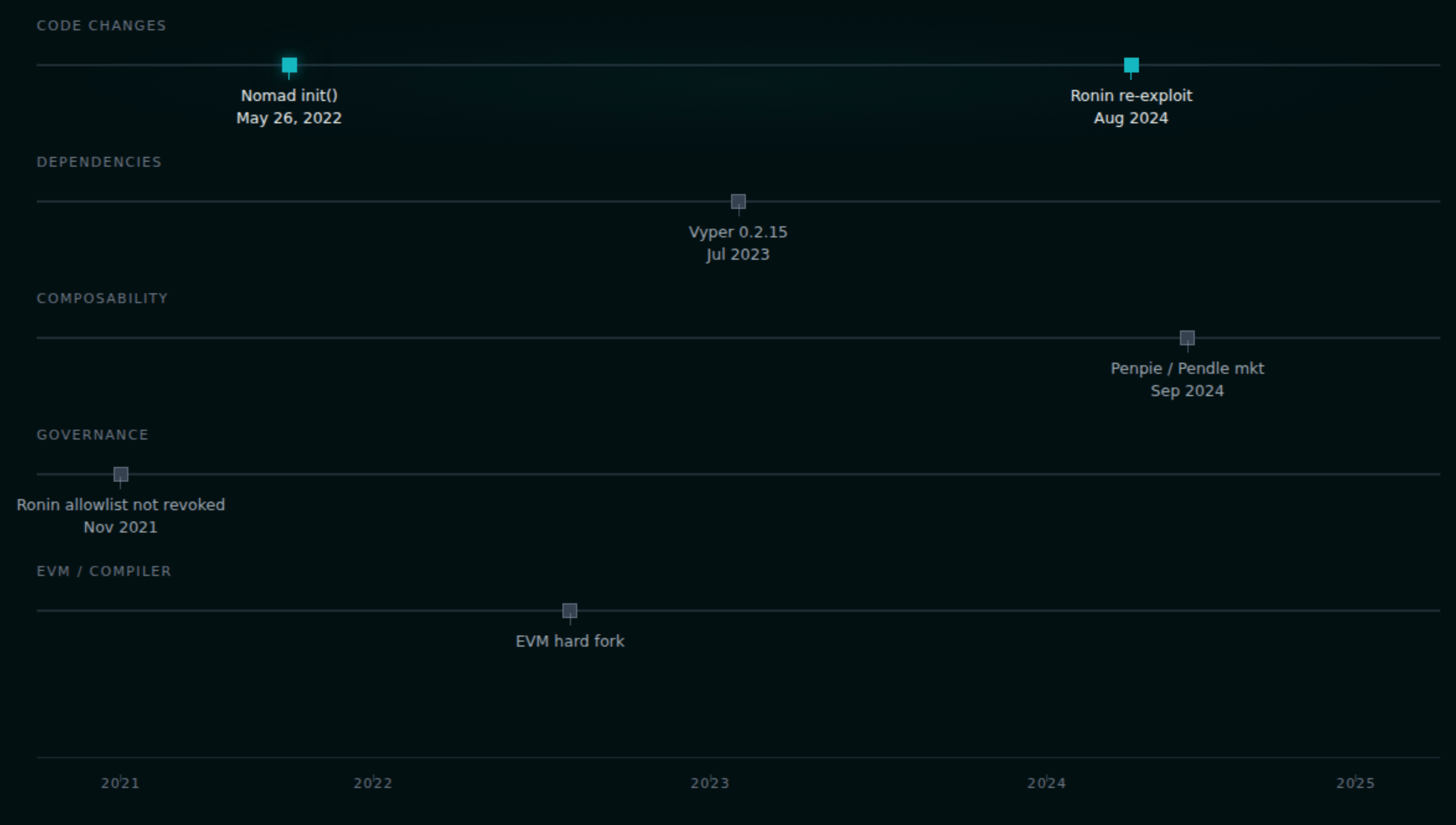

An audit attaches to a state of the world that stops existing the moment you ship. Zellic's "audit drift" research examined the top 20 Rekt Leaderboard incidents and found 15 were in unaudited or post-audit-modified code paths. The decay vectors are predictable:

Code changes. Nomad's May 26, 2022 `initialize()` change, which set the zero hash as a trusted root, was shipped mid-audit and survived remediation review. The August 2024 Ronin re-exploit ($12M) came from an upgrade that introduced an uninitialized `_totalOperatorWeight`, defaulting minimum vote weight to zero. Deployed without audit.

Dependencies. The Vyper 0.2.15 / 0.2.16 / 0.3.0 compiler bug cost $73M across Curve pools in July 2023 despite those pools being individually audited. The bug was in the toolchain, not the source. Chainlink feed deprecations, Aave v2→v3 API changes, LST exchange rates assumed 1:1: every integration you consume can invalidate your model.

Composability. Penpie lost $27M in September 2024 to a permissionless Pendle market registration feature added in May 2024, after the last audit. Anyone could register a market with a malicious SY token, and the `_harvestBatchMarketRewards` path re-entered it.

Governance. Timelock parameter changes, new role grants, oracle registry swaps, new collateral onboarding: each of these is a new threat model.

EVM and compiler upgrades. The EEA EthTrust standard explicitly invalidates certification when the EVM version changes. Every hard fork is an audit-decay event for someone.

If none of that is news, good. The point is that post-audit security is not a vibe — it is the specific set of controls that keep a protocol safe while all of this decay is happening in the background. If you haven't yet, read our breakdown of 2025's biggest exploit lessons for the same pattern across last year's losses.

Monitoring: what to watch and why

On-chain monitoring is not SIEM with a different logo. The telemetry is globally observable and unforgeable, but lossy for intent — you see the payload, not the motive. Detection has to infer from sequences.

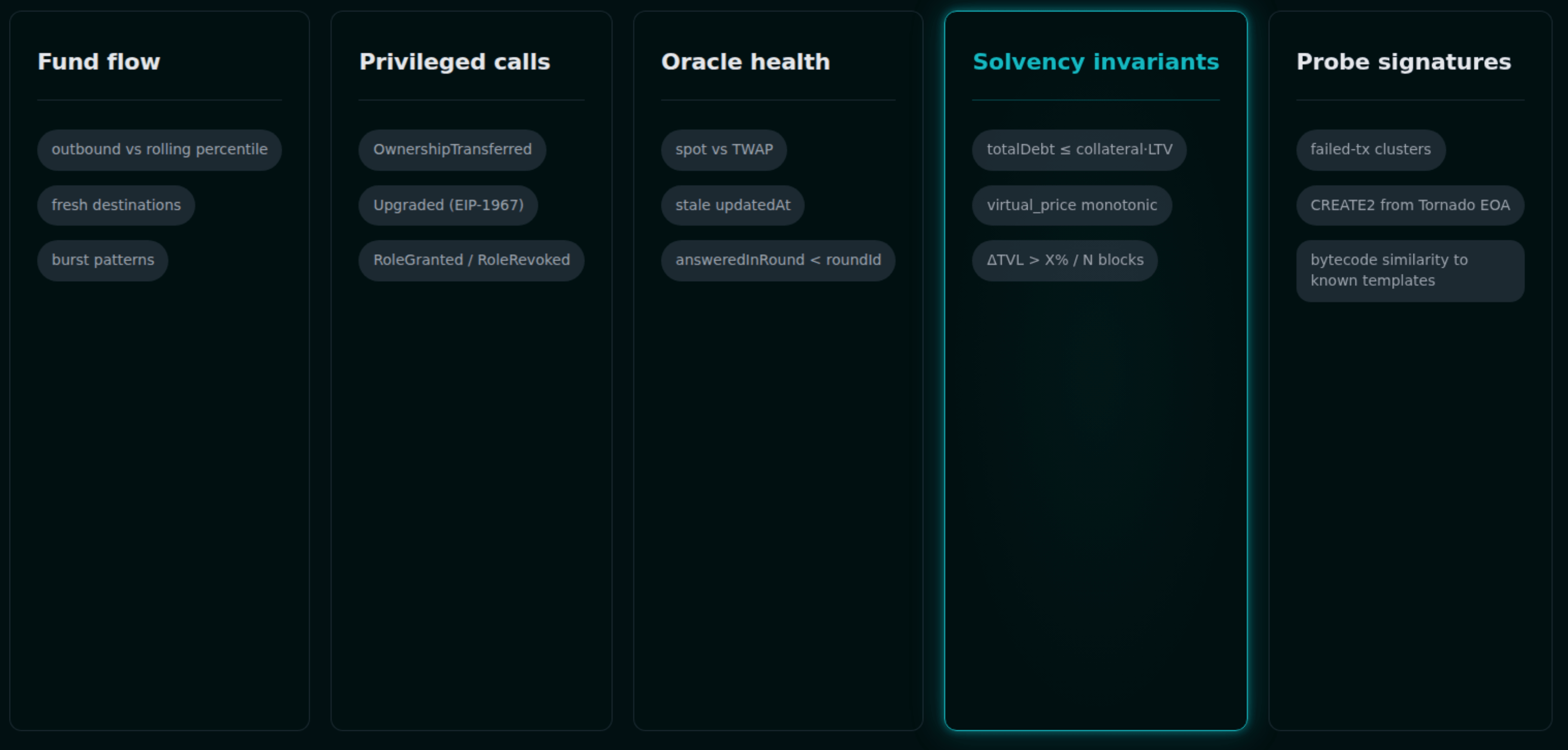

The signals that actually matter:

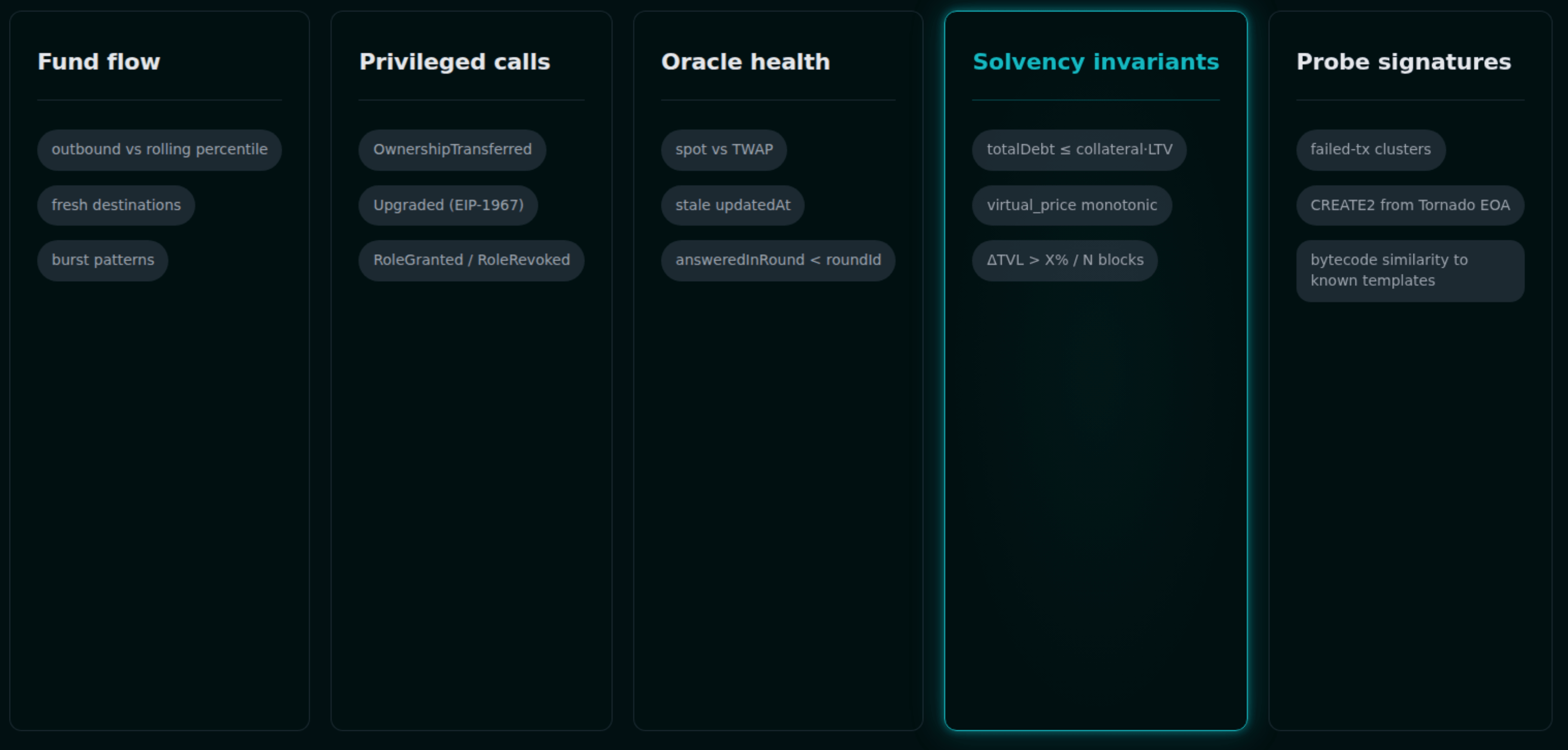

- Fund flow. Outbound transfers above a rolling percentile, destinations less than N blocks old, burst patterns, counterparties you have never seen. This is the floor, not the ceiling.

- Privileged calls. `OwnershipTransferred`, `Upgraded` (EIP-1967 slot), `RoleGranted`/`RoleRevoked`, any call to `setImplementation` or equivalent. Governance events: `ProposalCreated`, `QueueTransaction`, `ExecuteTransaction`, delegation-weight jumps.

- Oracle health. Spot-versus-TWAP deviation, missing heartbeats, `answeredInRound < roundId`, stale `updatedAt`, L2 sequencer-uptime feed status. See our deeper dive on oracle manipulation for the attack-side view.

- Solvency invariants. `totalDebt ≤ totalCollateral · LTV`, `virtual_price` monotonicity, ΔTVL greater than X% in N blocks, reserves matching accounting.

- Probe-phase signatures. Failed-tx clusters are often reentrancy or arithmetic-edge probing. CREATE2 deploys from Tornado-funded EOAs. Bytecode similarity to known exploit templates. Pending-mempool transactions targeting privileged selectors.
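The oracle-health signals in the list above can be combined into one check. The following is a minimal sketch, not a production bot: the `RoundData` fields mirror the values returned by Chainlink's `latestRoundData()`, while the function name, the issue labels, and the heartbeat and deviation thresholds are hypothetical and would need tuning per feed.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RoundData:
    """Subset of the values returned by a Chainlink-style latestRoundData()."""
    round_id: int
    answer: int             # price in the feed's fixed-point units
    updated_at: int         # unix timestamp of the last update
    answered_in_round: int


def oracle_health_issues(rd: RoundData, now: int, heartbeat_s: int,
                         twap: int, max_deviation_bps: int) -> List[str]:
    """Return the list of failed health checks (hypothetical labels)."""
    issues = []
    if rd.answer <= 0:
        issues.append("non_positive_answer")
    # The answer was carried over from an earlier round.
    if rd.answered_in_round < rd.round_id:
        issues.append("stale_round")
    # No update within the feed's expected heartbeat.
    if now - rd.updated_at > heartbeat_s:
        issues.append("missed_heartbeat")
    # Spot price deviates from the TWAP by more than the allowed basis points.
    if twap > 0:
        deviation_bps = abs(rd.answer - twap) * 10_000 // twap
        if deviation_bps > max_deviation_bps:
            issues.append("twap_deviation")
    return issues
```

An empty list means every check passed; a bot would alert, or trigger a pre-wired response, on any non-empty result.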

The platforms worth knowing about each occupy a different part of this space. Forta Network runs decentralized detection bots plus an ML ensemble (Malicious Contract ML, Tornado-funding, Victim ID bots); its Firewall product does pre-chain screening at the L2 sequencer level on Celo, Ink, and Plume. OpenZeppelin Defender (Monitors + Actions + Relayers) has the tightest integration with Safe-based governance — Compound, Synthetix, dYdX, and Yearn all rely on it. Hosted Defender sunsets July 1, 2026; the Relayer and Monitor components are going open-source.

Hypernative and Hexagate (acquired by Chainalysis in December 2024) are the current frontier for proprietary ML plus pre-transaction policy enforcement. Hypernative's Guardian and Hexagate's GateSigner both sit as co-signers on Safe transactions and reject payloads that violate policy before they are ever signed — which matters enormously given where attacks are actually happening in 2025–2026. Tenderly remains the go-to for simulation and war rooms, with the important caveat that its simulations were fooled during both Radiant and WazirX; the simulated tx and the signed tx were not the same.

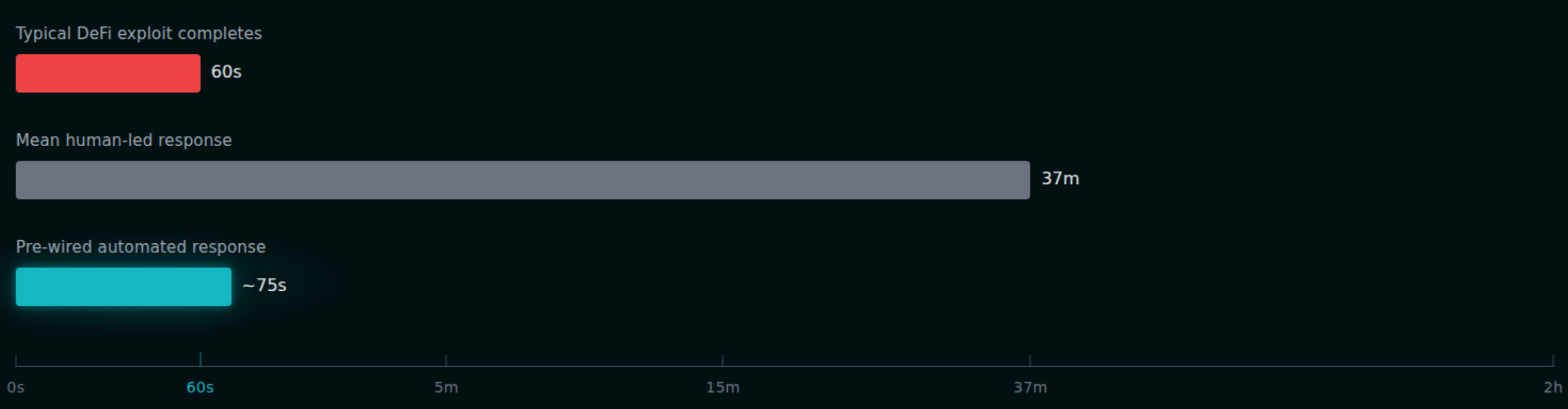

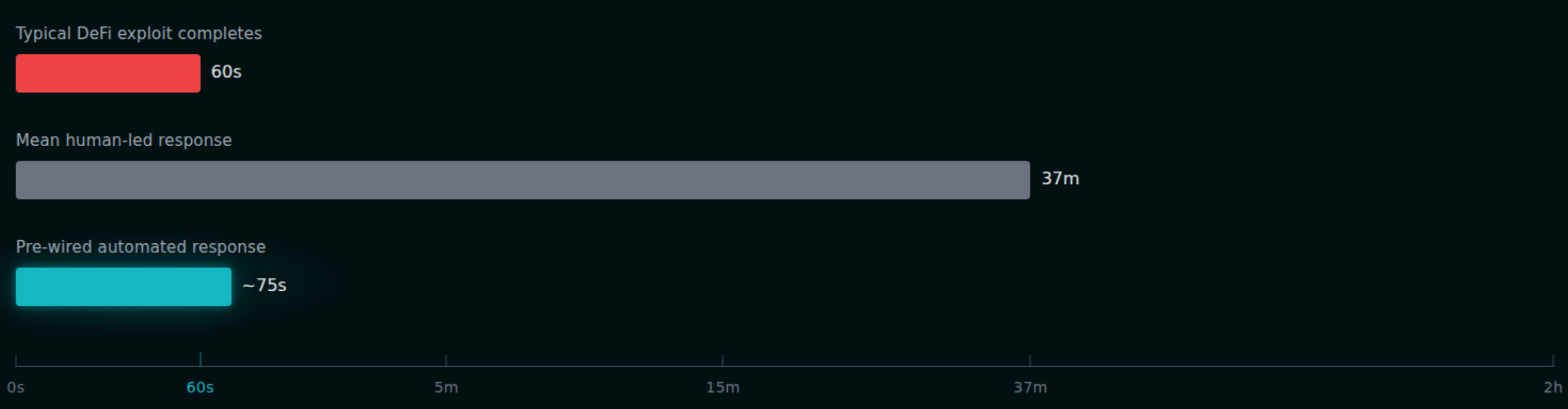

The ugly fact: a typical DeFi exploit completes in under 60 seconds. Mean human-led incident response is around 37 minutes. If you have not pre-wired automation, detection is forensics, not defense.
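Pre-wired automation needs a detector that returns a decision without a human in the loop. The sketch below illustrates the rolling-percentile outflow signal described earlier. The class name, window size, percentile, and warm-up count are all assumptions chosen for illustration, and a real deployment would feed it decoded transfer events rather than bare integers.

```python
from collections import deque
from typing import Optional


class OutflowMonitor:
    """Flags outbound transfers that exceed a rolling percentile of recent ones."""

    def __init__(self, window: int = 500, percentile: float = 0.99,
                 min_samples: int = 50):
        self.history = deque(maxlen=window)   # recent non-anomalous amounts
        self.percentile = percentile
        self.min_samples = min_samples        # warm-up before any alerting

    def threshold(self) -> Optional[int]:
        """Current percentile cutoff, or None while still warming up."""
        if len(self.history) < self.min_samples:
            return None
        ordered = sorted(self.history)
        idx = min(len(ordered) - 1, int(self.percentile * len(ordered)))
        return ordered[idx]

    def observe(self, amount: int) -> bool:
        """Return True if `amount` is anomalous; otherwise record it."""
        limit = self.threshold()
        anomalous = limit is not None and amount > limit
        # Anomalous amounts are not recorded, so a burst of large
        # transfers cannot drag the baseline upward.
        if not anomalous:
            self.history.append(amount)
        return anomalous
```

The boolean result is what gets wired to an automated action (pause, rate limit, or signer revocation), so the response time is bounded by block time rather than by a pager.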

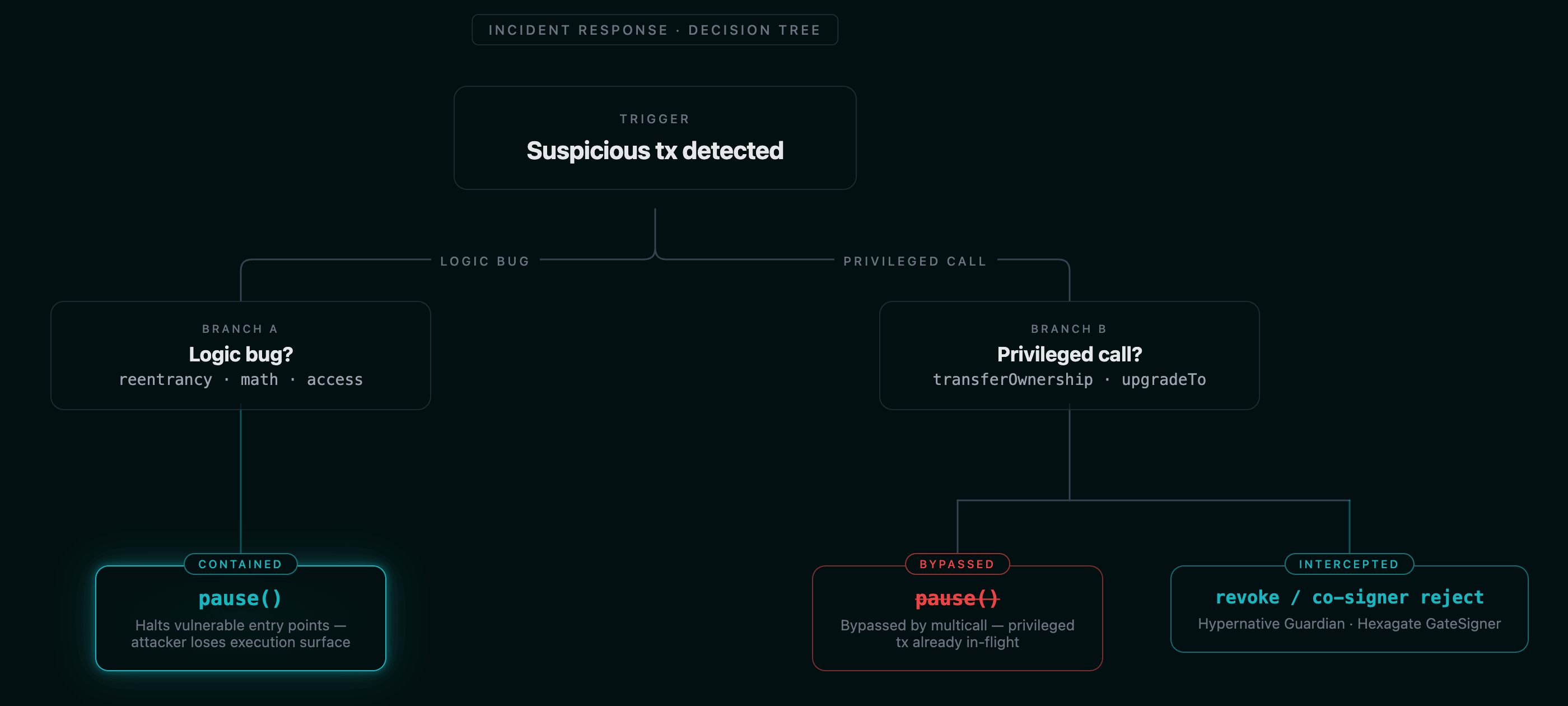

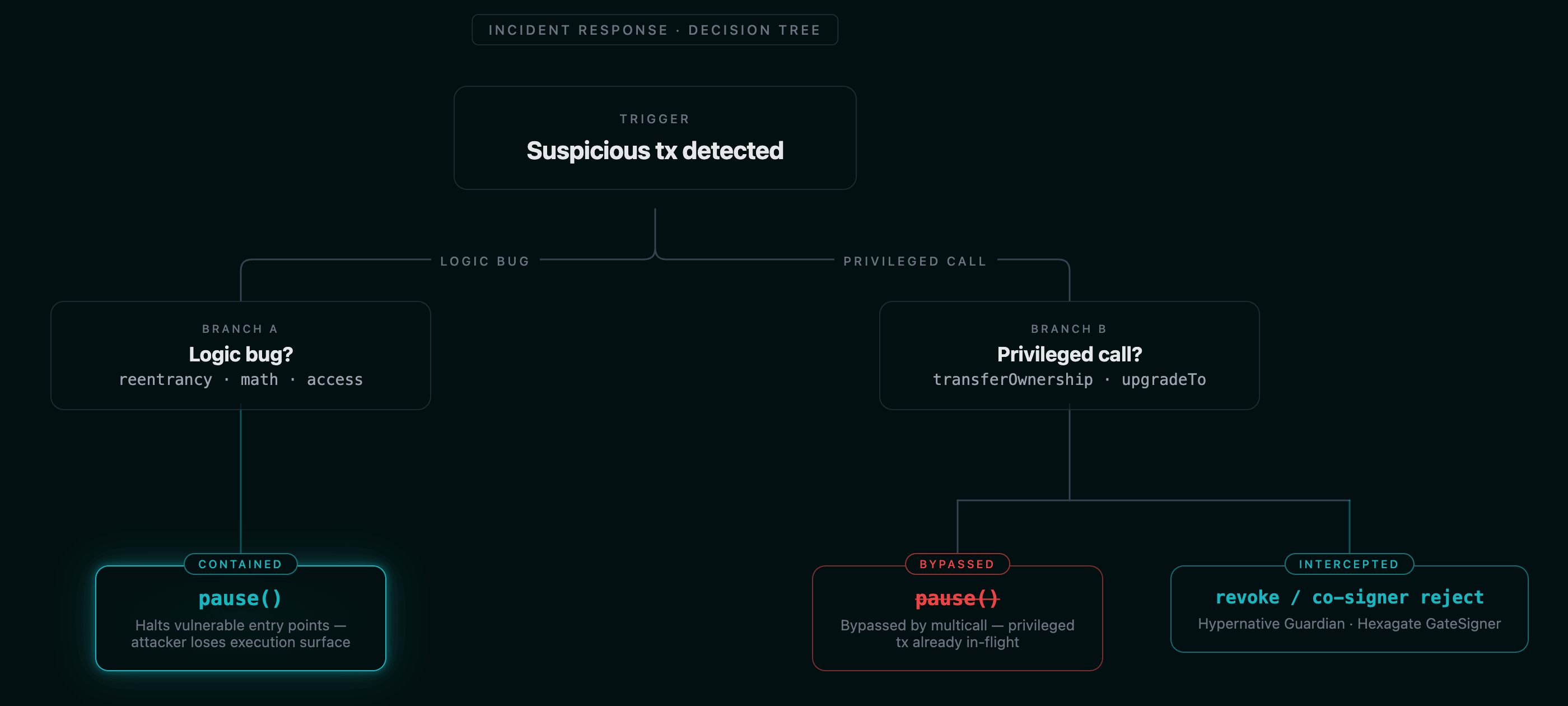

The limits of the pause button

Auto-pause is necessary and insufficient. It works beautifully against code-bug exploits: a reentrancy, a flash-loan manipulation, an oracle deviation. It is useless against access-control attacks, because an attacker who has taken ownership can upgrade past your pause guard. Radiant, October 2024: Hypernative's automated incident response fired on Ethereum and BSC, but the attacker's same-transaction `multicall([transferOwnership, upgradeTo])` bypassed the pause gate entirely on Arbitrum, where the drain had already completed. Pause is not protection when the compromised principal can mutate the protocol.

The lesson is to wire monitoring to revocation, not just pause. If your IR automation detects a malicious governance action in flight, it should remove the compromised signer, revert ownership via a guardian role, or trigger an Arbitrum-style Security Council freeze, not just flip the pause flag and hope.
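One way to make "revocation, not just pause" concrete is a playbook that maps each alert class to an ordered list of responses. This is a sketch under stated assumptions: the alert kinds, action names, and their ordering are hypothetical, and each action stands in for a pre-signed or guardian-executable transaction that the protocol would have to provide.

```python
from enum import Enum
from typing import List


class AlertKind(Enum):
    LOGIC_EXPLOIT = "logic_exploit"          # reentrancy, oracle deviation, flash-loan manipulation
    PRIVILEGED_CALL = "privileged_call"      # unexpected transferOwnership / upgradeTo
    SIGNER_COMPROMISE = "signer_compromise"  # a multisig signer is believed compromised


class Action(Enum):
    PAUSE = "pause"
    REMOVE_SIGNER = "remove_signer"
    REVERT_OWNERSHIP = "revert_ownership"
    COUNCIL_FREEZE = "council_freeze"


# Ordered responses per alert class. Pause alone is only first
# for logic bugs; access-control alerts start with revocation.
PLAYBOOK = {
    AlertKind.LOGIC_EXPLOIT: [Action.PAUSE],
    AlertKind.PRIVILEGED_CALL: [Action.REVERT_OWNERSHIP, Action.COUNCIL_FREEZE, Action.PAUSE],
    AlertKind.SIGNER_COMPROMISE: [Action.REMOVE_SIGNER, Action.PAUSE],
}


def plan_response(kind: AlertKind) -> List[Action]:
    """Return the ordered actions to attempt for an alert class."""
    return list(PLAYBOOK[kind])
```

The design choice is that the playbook is data, so it can be reviewed, versioned, and rehearsed in drills independently of the detection code.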

Production patterns worth copying:

- Compound's Pause Guardian: per-market, can disable mint/borrow/transfer/liquidate but never redeem/repay, cannot unpause (only governance can).

- Aave v3's Guardian architecture: 5-of-9 multisig, per-asset pause and freeze, plus distinct `EmergencyAdmin`, `FreezingSteward`, and `LiquidationsGracePeriodSentinel` roles.

- MakerDAO's Emergency Shutdown Module: raised to a 300,000 MKR threshold in July 2024 with a 48-hour GSM Pause Delay.

- Arbitrum's Security Council: 9-of-12 emergency threshold that bypasses all timelocks, used on April 21, 2026 to freeze $71.5M linked to the KelpDAO exploit via a temporary Inbox upgrade.

For the upgrade-path side of this problem, see our proxy security checklist and UUPS vs Transparent vs Beacon comparison.

Invariants that run forever

The single most underused post-audit control is continuous invariant checking. Not your audit suite. Not your CI fuzzing. Live-state invariants that run every block and halt the protocol when violated.

Three complementary patterns, all in production somewhere:

View-function invariants on-chain. A view function returning bool (`totalDebt <= totalSupply * maxLTV`, `sum(userBalances) == totalSupply`, `virtualPrice >= lastVirtualPrice`) polled every block by a Forta bot or Defender Sentinel, with auto-pause on violation.

Forked-mainnet invariant suites on schedule. Tenderly Web3 Actions or Defender Actions fork mainnet nightly, run your Echidna or Medusa campaigns against real state, alert on regression. Trail of Bits' Curvance engagement ran a single 43-day Echidna/Medusa campaign; the underlying tooling now supports this cadence on mainnet forks.
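The view-function pattern reduces to evaluating a fixed set of predicates against a per-block state snapshot. The sketch below encodes the three example invariants named above. The `Snapshot` fields and invariant names are hypothetical, and fetching the values from chain (the part a Forta bot or Defender Sentinel would do) is deliberately left out.

```python
from dataclasses import dataclass
from typing import List

BPS = 10_000  # basis-point denominator


@dataclass(frozen=True)
class Snapshot:
    """Per-block protocol state; field names are illustrative assumptions."""
    total_debt: int
    total_supply: int
    max_ltv_bps: int
    sum_user_balances: int
    virtual_price: int
    last_virtual_price: int


# Each invariant returns True when the property holds.
INVARIANTS = {
    # totalDebt <= totalSupply * maxLTV, in integer basis points
    "debt_within_ltv": lambda s: s.total_debt * BPS <= s.total_supply * s.max_ltv_bps,
    # sum(userBalances) == totalSupply
    "balances_match_supply": lambda s: s.sum_user_balances == s.total_supply,
    # virtualPrice >= lastVirtualPrice
    "virtual_price_monotonic": lambda s: s.virtual_price >= s.last_virtual_price,
}


def violated_invariants(s: Snapshot) -> List[str]:
    """Names of invariants that fail for this snapshot, in declaration order."""
    return [name for name, holds in INVARIANTS.items() if not holds(s)]
```

A poller would call `violated_invariants` once per block and treat any non-empty result as a pause trigger.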

Runtime invariants that revert. EIP-7265 standardized this: a circuit breaker that halts outflows above a configured threshold, with a choice between hard revert and delayed settlement. MakerDAO's fundamental-equation-of-DAI check and Aave Shield are the production precedents. This is the pattern that would have prevented many of 2023–2024's largest exploits.
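The circuit-breaker idea can be modeled off-chain as a rolling-window outflow limiter. This is a sketch of the general technique only, not the EIP-7265 interface: the class name, the basis-point limit, and the window length are assumptions, and it models the hard-stop variant rather than delayed settlement.

```python
from collections import deque


class OutflowBreaker:
    """Trips when outflows within a rolling window exceed a share of TVL."""

    def __init__(self, max_outflow_bps: int, window_s: int):
        self.max_outflow_bps = max_outflow_bps
        self.window_s = window_s
        self._events = deque()    # (timestamp, amount) of accepted withdrawals
        self._window_total = 0
        self.tripped = False

    def request_withdrawal(self, amount: int, tvl: int, now: int) -> bool:
        """Return True if the withdrawal may proceed, False if it is blocked."""
        # Drop withdrawals that have aged out of the window.
        while self._events and now - self._events[0][0] >= self.window_s:
            _, old_amount = self._events.popleft()
            self._window_total -= old_amount
        # Once tripped, stay tripped until reset out of band (e.g. by governance).
        if self.tripped:
            return False
        limit = tvl * self.max_outflow_bps // 10_000
        if self._window_total + amount > limit:
            self.tripped = True
            return False
        self._events.append((now, amount))
        self._window_total += amount
        return True
```

With a 10% limit over one hour and a TVL of 1,000,000, a 60,000 withdrawal passes and a further 50,000 inside the same hour trips the breaker, which then blocks every later request.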

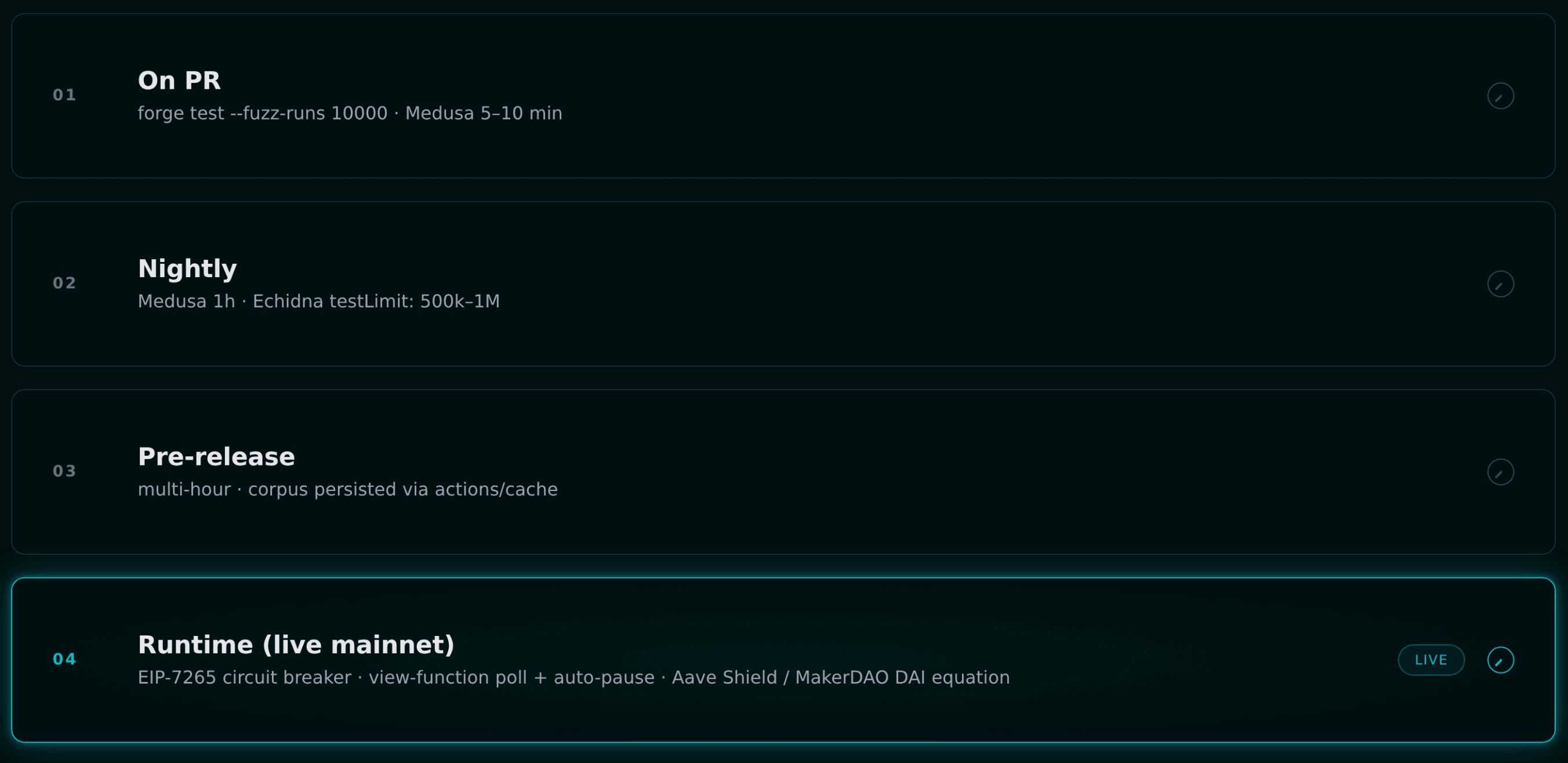

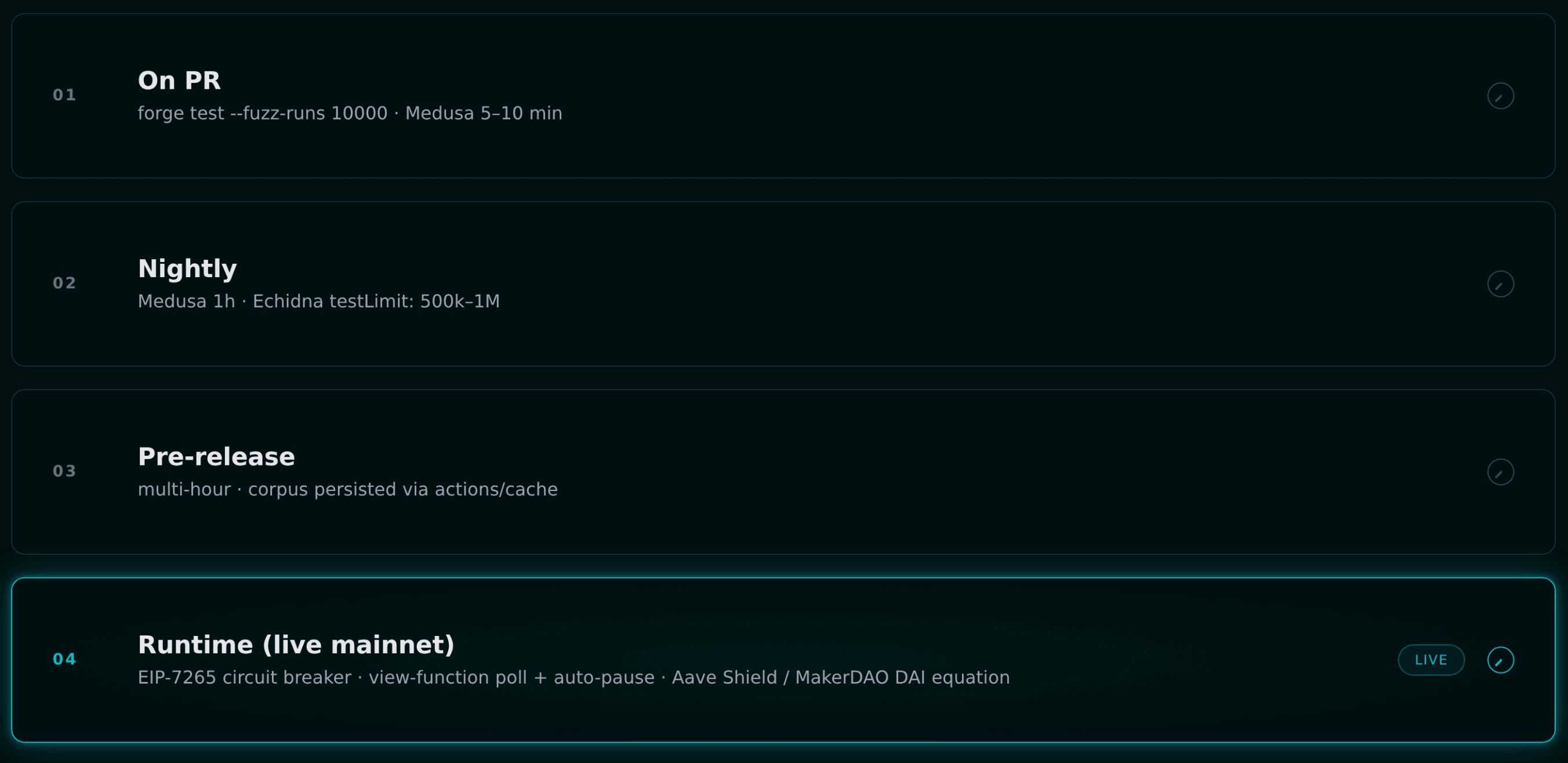

On the CI side, the tiering used at Aave, Morpho, Liquity, and Gearbox is roughly:

- PR trigger: `forge test --fuzz-runs 10000` plus a five-to-ten-minute Medusa pass.

- Nightly cron: Medusa for an hour, Echidna with `testLimit: 500k–1M`.

- Pre-release: multi-hour campaigns with corpus persisted via `actions/cache`.

Certora runs on every commit at Aave since March 2022, continuously at Compound, at Lido on the Dual Governance Escrow, and at MakerDAO on the Fundamental Equation of DAI. The pattern is that formal verification is not a deliverable; it is a CI step. For practical entry points, see our articles on fuzz testing and formal verification with fuzzing.

The part nobody wants to talk about: keys

Private-key compromise was 43.8% of 2024's crypto losses. Personal-wallet compromise grew from 7.3% in 2022 to 44% in 2024. The frontier has moved off-chain.

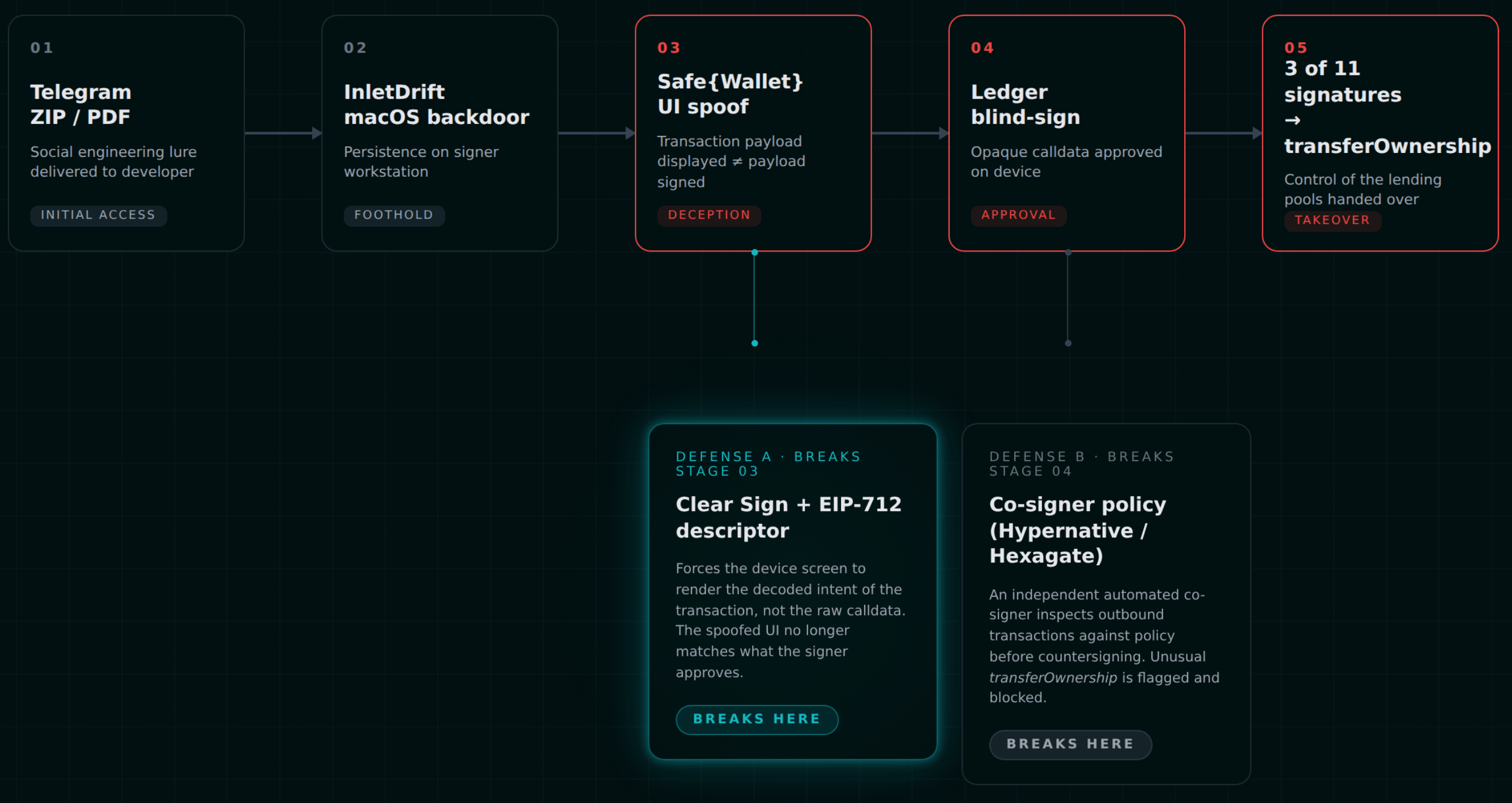

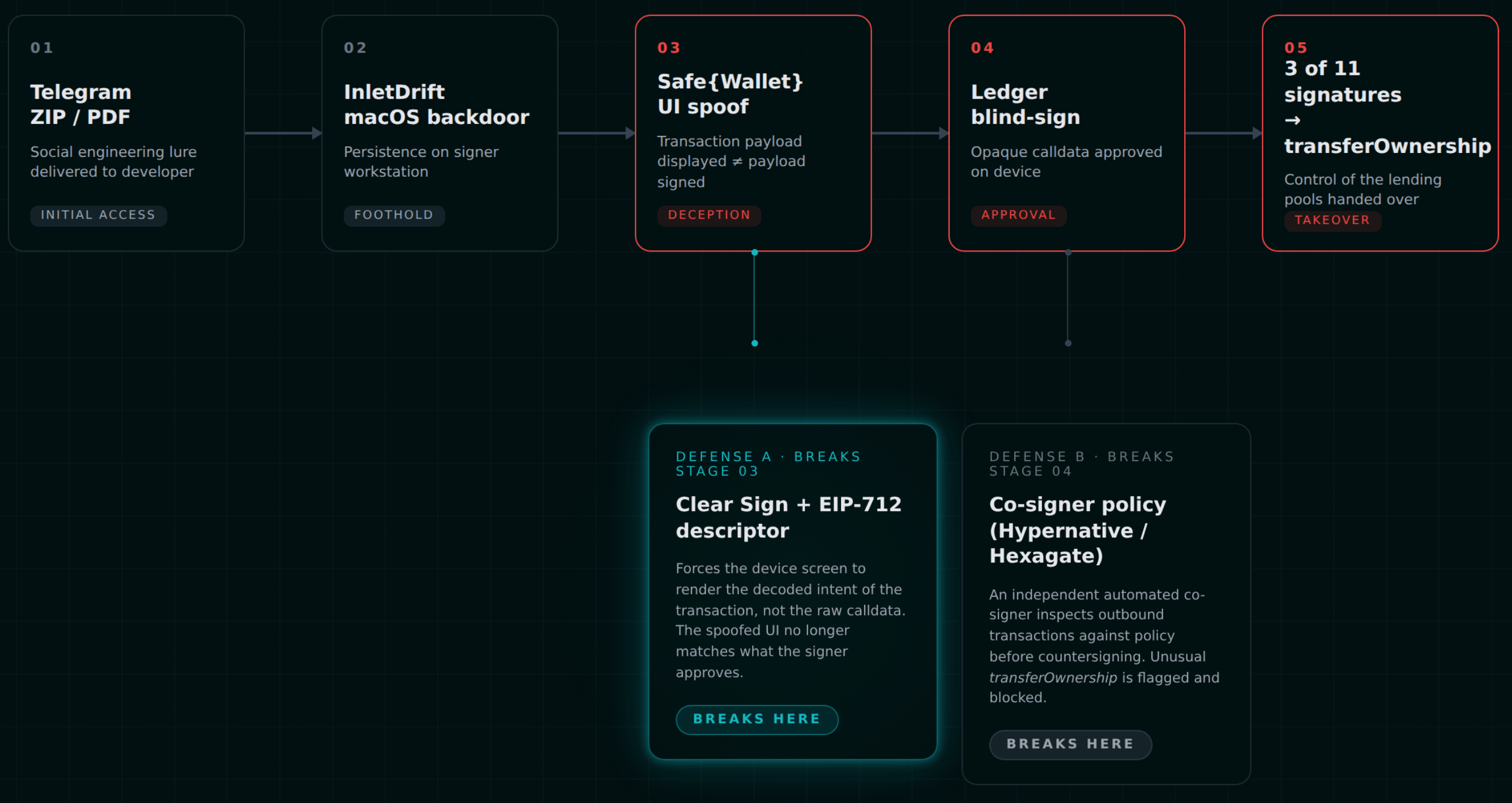

Radiant is the canonical case. On September 11, 2024, a contractor received a Telegram message with a ZIP/PDF attachment. The attachment dropped InletDrift, a macOS backdoor with OS-level persistence. When the contractor later signed Safe multisig transactions, Safe{Wallet}'s frontend displayed the legitimate payload while the Ledger received malicious `transferOwnership` calldata. Ledger's blind-sign mode for Gnosis Safe transactions meant no on-device verification. Tenderly simulations showed nothing wrong because they simulated the displayed transaction. The attacker triggered "retry" loops and collected three signatures from an 11-person multisig with a threshold of 3: 27% of signers was enough to take the protocol. $53M gone.
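The 3-of-11 arithmetic is worth checking mechanically for every Safe a protocol controls. The helper names below are hypothetical, and the 60% floor is taken from the rule of thumb in the practices listed next; the sketch uses integer math to avoid floating-point edge cases at the boundary.

```python
def threshold_pct(threshold: int, signers: int) -> float:
    """Share of signers an attacker must compromise, as a percentage."""
    return threshold * 100 / signers


def meets_floor(threshold: int, signers: int, floor_pct: int = 60) -> bool:
    """True if threshold/signers is at least floor_pct percent."""
    return threshold * 100 >= floor_pct * signers


def min_threshold(signers: int, floor_pct: int = 60) -> int:
    """Smallest threshold that satisfies the floor (ceiling division)."""
    return -(-signers * floor_pct // 100)
```

For an 11-signer Safe this gives `min_threshold(11) == 7`, so a 3-of-11 falls well short of the floor.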

The best practices that emerged, codified across SEAL and the major audit firms:

- Multisig math ≥60%. A 3-of-11 is an attack surface, not redundancy. Either raise the threshold or shrink N.

- Diverse hardware. Ledger, Trezor, and GridPlus on the same multisig, geographically distributed, so no single firmware vector compromises everyone.

- Ban blind-sign for Safe multisigs. Ledger Clear Sign JSON descriptors for EIP-712. Out-of-band calldata verification via `etherscan.io/inputdatadecoder` before confirming on-device.

- Co-signer policy enforcement. Hypernative Guardian and Hexagate GateSigner both act as programmable co-signers that reject transactions violating policy (a calldata selector allowlist, a destination allowlist, a size limit) before the human signers are ever asked.

- Atomic `swapOwner` rotation. Rotate signers as an atomic transaction, not sequential ones, to avoid a window where a compromised signer can still act.

- Recurring failure equals abort. If a Safe transaction "fails" and a signer is asked to retry, that is an incident, not a retry.
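The co-signer policy described in the list above (selector allowlist, destination allowlist, size limit) can be sketched as a pure function over the proposed transaction. This is an illustrative model, not how Hypernative Guardian or Hexagate GateSigner are implemented: the `Policy` type, function name, and rejection labels are assumptions.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Policy:
    """Hypothetical co-signer policy; all values lowercase hex strings."""
    allowed_selectors: FrozenSet[str]     # 4-byte selectors, e.g. "0xa9059cbb"
    allowed_destinations: FrozenSet[str]  # contract addresses
    max_value_wei: int


def check_transaction(policy: Policy, to: str, value_wei: int,
                      calldata_hex: str) -> List[str]:
    """Return the reasons to reject; an empty list means the co-signer signs."""
    reasons = []
    data = calldata_hex.lower()
    if data.startswith("0x"):
        data = data[2:]
    selector = "0x" + data[:8]   # first 4 bytes of calldata
    if to.lower() not in policy.allowed_destinations:
        reasons.append("destination_not_allowlisted")
    if len(data) < 8 or selector not in policy.allowed_selectors:
        reasons.append("selector_not_allowlisted")
    if value_wei > policy.max_value_wei:
        reasons.append("value_over_limit")
    return reasons
```

With only `0xa9059cbb` (`transfer(address,uint256)`) allowlisted, calldata starting with `0xf2fde38b` (`transferOwnership(address)`) is rejected before any human signer sees it, which is the property that matters in a payload-swap attack.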

Timelock norms are reasonably settled: Compound's canonical `MINIMUM_DELAY = 2 days`, `MAXIMUM_DELAY = 30 days`, `GRACE_PERIOD = 14 days`; Uniswap 2 days; MakerDAO's GSM at 48 hours; Aave v3 Executor L1 ~1 day, L2 core ~7 days; Lido 48–72 hours. OpenZeppelin's `TimelockController` with salt and predecessor for ordering is the reference implementation.

The first two hours are the whole game

Named incidents tell the story better than any framework. These are 0–2 hour outcomes:

| Incident | Loss | Outcome |
|---|---|---|
| Ronin (Mar 2022) | $625M | Six-day detection gap. Zero response. |
| Wormhole (Feb 2022) | $326M | Network restored in ~16h; Jump Crypto recapitalized same day; $140M recovered via Oasis counter-exploit Feb 2. |
| Poly Network (Aug 2021) | $611M | Tether froze $33M within hours; funds fully returned in 15 days. |
| Euler (Mar 2023) | $197M | War room plus TRM plus law enforcement plus on-chain negotiation; fully recovered. |
| Balancer (Aug 2023) | $11.7M at risk | 97%+ recovered in 48h via proportional exits; gold-standard post-mortem. |
| Munchables (Mar 2024) | $62.5M | Fully returned same day; Blast's 14-day withdrawal delay gave time to negotiate. |
| Venus (Sep 2025) | $13M saved | Hexagate detected anomaly 18h pre-attack, DAO-mediated freeze left attacker net-negative. |
| Radiant (Oct 2024) | $53M | Auto-pause bypassed by multicall; unrecovered; TVL collapsed from $300M to $5.8M. |
| Bybit (Feb 2025) | $1.5B | Largest crypto heist ever; OpSec failure at the Safe{Wallet} UI level. |


The correlation is not subtle. Protocols with pre-wired automation, a SEAL 911 contact on file, an adopted Safe Harbor Agreement, and a rehearsed runbook recover 80–100% of funds. Protocols with only economic levers — CurveDAO could only kill gauges — depend on whitehat MEV bots and exchange freezes. Protocols with nothing get Ronin's six-day gap.

The incident response structure that works, codified in SEAL's playbooks: one Incident Commander who owns the clock, a Technical Lead for reproduction and patching, a Comms Lead who gatekeeps external statements (no premature "funds are safe"), a Legal/Regulatory Lead for law enforcement and exchange liaison, a single Whitehat/External Liaison to SEAL 911, and a Scribe timestamping everything in UTC. Strict role separation, one person per role, clocks running.

SEAL 911 itself is worth enrolling in before you need it. Telegram-based (`@seal_911_bot`), roughly 40 vetted whitehats, authenticity verified by keyword-repeat through the bot, invite-only, free. More than 1M, 72-hour return window, KYC/OFAC post-decision. 20+ protocols, $68B+ covered. Uniswap, Aave, Pendle, Balancer, zkSync, Silo, Polymarket, and PancakeSwap are all in.

For the governance-attack side of incident response, see our piece on DAO governance attacks.

The frameworks worth actually reading

Most security frameworks are too broad to be actionable for a protocol team. A few are genuinely useful:

- The Rekt Test (Trail of Bits, 2023) — twelve questions modeled on the Joel Test. "Do you have written and tested incident response plans?" "Do you require hardware security keys for production systems?" "Do your key invariants get tested on every commit?" If you can't answer yes to all twelve, you have a prioritization list.

- SEAL's framework collection at `frameworks.securityalliance.org`: war room guidelines, communication templates, playbooks. Free.

- OWASP SCSVS (current stewards: CredShields and OWASP, 2024 refactor with Ethereum Foundation ESP funding): eleven control groups covering architecture, governance, oracle, bridge, DeFi, composability. The companion SCWE is CWE-style and searchable.

- EEA EthTrust Security Levels (v2, December 2023) — three levels: [S] automatic (Slither-checkable baseline), [M] manual audit review, [Q] quality including MEV protection and documented behavior. Certification is explicitly bound to specific EVM and compiler versions.

- OWASP Smart Contract Top 10 — the 2025 version ranks access control first (it accounted for $953M of 2024 losses). The 2026 draft adds Proxy and Upgradeability as a distinct category and elevates Business Logic to #2.

NIST Cybersecurity Framework 2.0 covers the off-chain perimeter; the Smart Contract Security Field Guide (`scsfg.io`) covers the inside; ISO 27001 / SOC 2 anchor vendor review. The practical priority for a lean Web3 team is narrow: dedicated signing hardware, SRI and CSP and DNSSEC on frontends, and review the SOC 2 reports of anyone holding your RPC or CDN.

What "mature" actually looks like

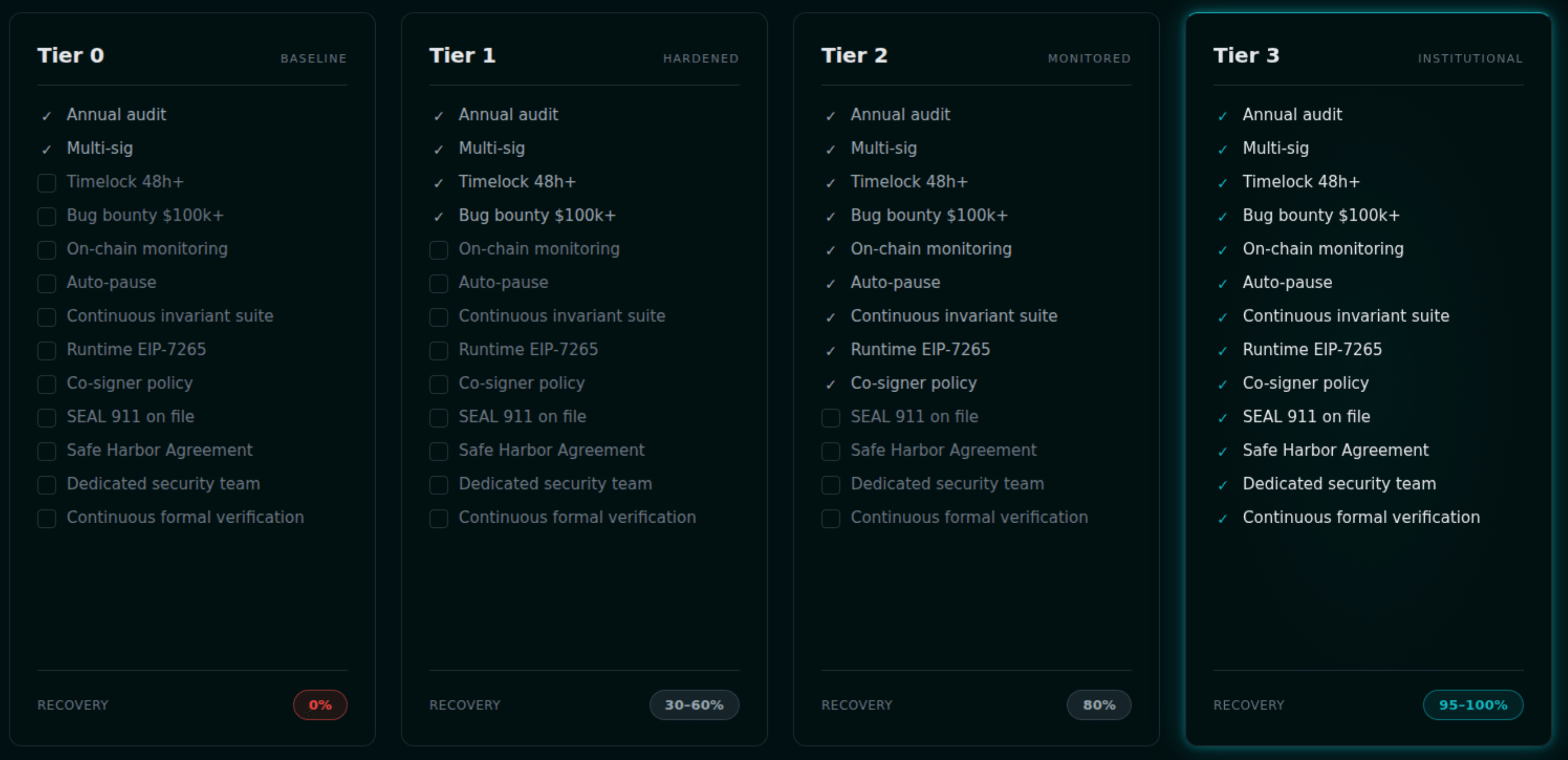

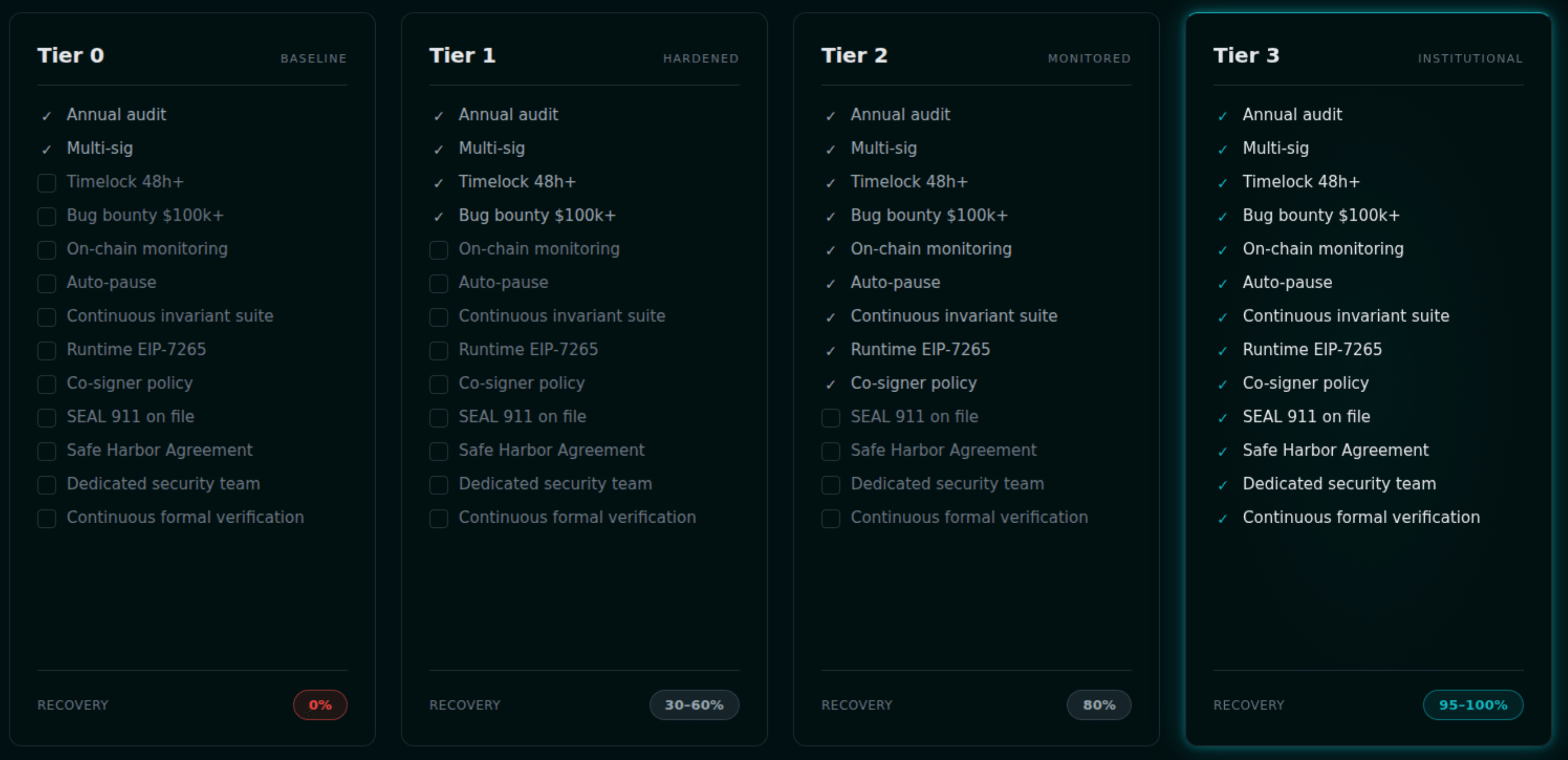

A maturity model for protocol security, distilled from what the protocols that survive their first major incident actually do:

- Tier 0 (Immature). Single pre-launch audit. 2-of-3 EOA multisig. No timelock. No bounty. No monitoring. Ad-hoc IR in a private Telegram.

- Tier 1 (Basic). Annual audits plus spot reviews on upgrades. 4-of-7 Safe with hardware wallets. 24–48h timelock. Immunefi bounty in the 1M range. Defender or Forta on admin functions. Documented runbook with defined roles.

- Tier 2 (Mature). Per-module audits on every significant change, with a lead-auditor retainer. 5-of-9 multisig, diverse hardware vendors, blind-sign mitigated, geographically distributed. Tiered timelock (48h to 7 days) plus an Emergency Shutdown Module. TVL-scaled bounty at $1–5M. Multi-vendor monitoring with custom invariant bots and auto-pause. Certora for critical invariants. Dedicated security engineer plus a head of security. Quarterly IR drills. SEAL 911 contact on file.

- Tier 3 (Best-in-class). Continuous firms rotating in CI. Role-separated Safes with MPC where applicable; elected security council. Dual Governance with rage-quit. $5–10M bounty plus Safe Harbor adopted on-chain. Multi-vendor monitoring with automated response plus runtime-enforced invariants. Continuous formal verification on every commit. Multiple IR vendors on retainer. Security team of three or more, red team, public post-mortem culture, SEAL-ISAC membership.

Very few protocols are at Tier 3. Aave's BGD Labs retainer, Lido's GRAPPA reviewer-in-residence plus asymmetric 4/6-or-1/6 Deposit Security Committee, MakerDAO's dedicated Immunefi Security Core Unit, Euler's post-hack redesign with 45 audits across 13 firms over 7 months — these are the protocols that survived or recovered from existential incidents and adapted. The gap between Tier 2 and Tier 3 is not money; it is organizational ownership. For the budget math behind the tiers, see our pieces on 2026 audit pricing and audit vs DeFi insurance.

The economic argument

Annual DeFi losses: 2021 around 3.8B (Ronin, BNB Bridge, Wormhole, Nomad, Mango). 2023 around 2.2B, with DPRK-linked activity at 61% of the total. 2025 at 1.5B accounting for 44% alone.

Mid-2026 market rates: a mid-complexity DeFi audit runs 5–30k per month; basic continuous monitoring 50–500k per year; a dedicated security engineer 350–600k. A single 500k-per-year monitoring. Radiant's TVL collapsed 98% after its 300M to $5.8M. The protocol was effectively destroyed.

The math is not complicated. It just requires treating the audit as step one of twenty, not twenty of twenty. The investor-facing version of this argument is in our audit ROI guide and investor due-diligence breakdown.

The closed loop

Detect → triage → respond → learn → harden. Every incident teaches you which invariant you should have been checking, which signer you should have removed, which rate limit you should have capped, which calldata you should have routed through a co-signer. Every post-mortem is a spec for next quarter's controls. The protocols that survive compound this knowledge; the ones that don't treat each incident as singular.

The audit is a commit hash. Security is what you do between commits.

Next step for protocol teams

Before you start bolting monitoring onto a running protocol, make sure you aren't paying for audit time that could have been saved upstream. Read our pre-audit checklist and our defense-in-depth workflow to see where the "between commits" layer fits in the full security lifecycle.

Get in touch

Zealynx builds the post-audit layer alongside the audit itself — monitoring invariants, runbooks, co-signer policies, and continuous review retainers so the stack keeps working between commits. If your protocol has just shipped, or is about to, we can help you wire the rest of the controls.

FAQ: Post-audit security

1. What does "audit decay" actually mean?

Audit decay is the gap that opens between a point-in-time audit report and the live protocol. The report attests to a specific commit, under specific assumptions (compiler, EVM version, dependencies, trust model). Every change after that — a hotfix, a new collateral, a dependency upgrade, a governance parameter — is outside the scope of what the auditors reviewed. Zellic's research showed 15 of the top 20 Rekt losses were in code paths that were either never audited or were modified after audit. Decay is the default state; staying secure requires controls that live beyond the report.

2. I already have an audit and a multisig. Isn't that enough?

An audit plus a multisig is table stakes, not a finished posture. The audit covers logic at a commit; the multisig covers privilege. Neither detects a live exploit, neither pauses outflows when solvency invariants break, neither stops a signer from blind-signing malicious calldata, and neither ensures the 37-minute mean human response time beats the 60-second exploit completion time. The full stack is monitoring with auto-response, continuous invariants (some runtime-enforced per EIP-7265), key-compromise controls (clear-sign, co-signer policy, diverse hardware), and a rehearsed incident-response runbook with SEAL 911 on file. Audit + multisig is the floor; mature protocols layer 5–6 more controls on top.

3. What is a runtime invariant, and how is it different from an invariant test?

An invariant test is an offline fuzzer — Echidna, Medusa, Foundry — trying random call sequences against a property you defined (e.g., "total debt never exceeds total collateral times max LTV"). It runs pre-deployment and in CI. A runtime invariant is the same property checked on live mainnet state, every block, with automatic action on violation. The property is either polled off-chain by a Forta bot or OpenZeppelin Defender Sentinel (which then triggers pause), or enforced on-chain per EIP-7265's circuit-breaker standard (the transaction reverts when the invariant would break). MakerDAO's fundamental equation of DAI and Aave Shield are the production precedents. Invariant tests prevent shipping a broken protocol; runtime invariants prevent draining a working one.

4. What is SEAL 911 and the Safe Harbor Agreement, and should we be enrolled?

SEAL 911 is a Telegram-based emergency contact network run by the Security Alliance: roughly 40 vetted whitehat responders reachable via `@seal_911_bot`, invite-only, free. Since its February 2024 launch, it has helped coordinate over 1M) and a 72-hour return window with post-decision KYC/OFAC checks. Over 20 protocols ($68B+ TVL combined) have adopted it, including Uniswap, Aave, Pendle, and Balancer. Enroll before you need it. Adopting it during an incident is too late.

5. Why did Radiant's auto-pause fail, and how do we avoid the same mistake?

Radiant's pause triggered correctly on Ethereum and BSC, but the attacker compressed the full attack into a single Arbitrum transaction using `multicall([transferOwnership, upgradeTo, ...])`. By the time pause would have fired, ownership had already moved and the contract had been upgraded to a malicious implementation. The fix is to design privilege architecture so pause is not your only lever. Use a guardian role that can revoke ownership, a timelock on `transferOwnership` and `upgradeTo`, a co-signer policy (Hypernative Guardian or Hexagate GateSigner) that rejects privileged calldata before the multisig ever signs, and an Arbitrum-style security council with emergency freeze power. Pause protects you from logic bugs; revocation and co-sign protect you from compromised signers.

6. How do I justify the post-audit security budget to leadership?

Frame the comparison in concrete numbers, not abstractions. A mid-complexity audit costs 50–500k per year. A dedicated security engineer is 350–600k. A single 500k/year monitoring line item. Radiant lost 98% of its TVL after its 1.5B in February 2025 to a frontend/OpSec failure, not a smart-contract bug. Protocols that pre-wire automation and have a rehearsed runbook recover 80–100% of funds; protocols that don't recover zero. This isn't "what if" — it's the 2021–2025 base rate. For the investor-facing version of this argument, see our audit ROI article.

Glossary

| Term | Definition |
|---|---|
| Audit Decay | Gradual divergence between an audit report and the live protocol as code, dependencies, and governance change. |
| Incident Response | Coordinated process of detecting, triaging, and recovering from an active protocol exploit or compromise. |
| Circuit Breaker | Runtime control that halts or delays outflows when predefined invariants are violated (see EIP-7265). |
| Invariant | A property the protocol must always satisfy; violated invariants signal a bug or exploit. |
| Blind Signing | Signing a transaction whose contents the hardware wallet cannot verify, enabling payload-swap attacks. |
| Timelock | Delay enforced between a governance action being proposed and executed, giving users a window to react. |
